ClarkVision.com

| Home | Galleries | Articles | Reviews | Best Gear | Science | New | About | Contact |

DSLR vs Mirrorless Cameras

by Roger N. Clark

An important factor to consider when choosing between a DSLR and a mirrorless camera is the camera's autofocus ability. Some photographers are enthralled with the new mirrorless technology, but it has significant limitations for some types of photography, especially action photography.

Many photographers are asking when to switch to a mirrorless camera. Some claim that the DSLR is doomed and that everyone will soon switch to mirrorless. There is a fundamental reason why DSLRs will stay around and remain the choice for action photography: phase detect autofocus, or PDAF.

Phase detect autofocus is a focusing method that uses the out-of-focus image to sense the light field from different parts of the lens. In a DSLR, the mirror one sees when looking into the camera body (e.g. with no lens on the camera) is a partially transmitting mirror (a beamsplitter). Some light is reflected up to the pentaprism, where the image is erected for presentation to the photographer's eye and where the AF rectangles are shown in the field of view; the light metering sensors may also be up there. Light that passes through the beamsplitter is reflected by a second mirror down onto the AF sensors in the bottom of the camera body. In the PDAF module, small masks block all light from the lens except light from small zones on two opposite sides of the lens, and lenses behind the masks focus the light from each side onto a linear array. This is explained in more detail with diagrams here. By comparing the two signals, the PDAF module measures the light field and can determine, with a single measurement, both how far and in which direction the lens is out of focus.
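The single-measurement idea can be illustrated with a minimal Python sketch. It is not any manufacturer's algorithm; it assumes each linear array records the same 1-D intensity profile and that defocus simply shifts the two profiles apart. The hypothetical function `phase_shift` finds the shift that best aligns the two signals: the sign of the result gives the direction of defocus and its size gives the amount, all from one pair of readouts.

```python
def phase_shift(left, right, max_shift):
    """Return the integer shift s that best aligns right[i] with left[i - s].

    Uses the mean squared difference over the overlapping samples.
    The sign of s gives the focus direction, its size the amount.
    """
    best_shift, best_cost = 0, float("inf")
    n = len(left)
    for s in range(-max_shift, max_shift + 1):
        overlap = [(left[i - s], right[i]) for i in range(n) if 0 <= i - s < n]
        cost = sum((a - b) ** 2 for a, b in overlap) / len(overlap)
        if cost < best_cost:
            best_shift, best_cost = s, cost
    return best_shift


def edge_profile(n, position):
    """Hypothetical blurred edge: 0 left of position, ramping up to 1."""
    return [min(1.0, max(0.0, (i - position) / 4)) for i in range(n)]


# Simulated defocus: the two sub-aperture images move apart by 3 samples each.
left = edge_profile(64, 30 - 3)
right = edge_profile(64, 30 + 3)
print(phase_shift(left, right, max_shift=10))  # 6
```

Swapping the two arrays (defocus on the other side of best focus) gives -6, and identical arrays (in focus) give 0.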

The advantage of this system is speed and accuracy: with a single fast measurement (a millisecond or less) of the phase of the light from the two sides of the lens, the camera can determine precisely how far and in which direction the lens is out of focus. The lens can then be commanded to move to that point without any additional checks. With a second measurement (which again may take only a millisecond or less), the subject's speed and direction of movement can also be determined. The phase detect system then predicts where the moving subject will be when the shutter actually opens, accounting for delays in the mechanics of the system, including raising the mirror of a DSLR. This is called predictive autofocus (Canon calls it AI Servo).
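A minimal sketch of the prediction step, assuming the simplest possible model: constant subject velocity between measurements and a straight-line extrapolation to the moment the shutter opens. Real predictive AF systems are proprietary and likely more elaborate; the function name and the numbers below (including the 60 ms delay) are hypothetical.

```python
def predict_focus_distance(t0, d0, t1, d1, shutter_delay):
    """Constant-velocity prediction of subject distance at shutter time.

    t0, t1: times of two PDAF measurements (s)
    d0, d1: subject distances measured at those times (m)
    shutter_delay: time from the second measurement until the shutter
        opens (s), including mirror lift in a DSLR
    """
    velocity = (d1 - d0) / (t1 - t0)  # m/s; negative means approaching
    return d1 + velocity * shutter_delay


# Hypothetical: subject approaching at 10 m/s, measured 5 ms apart,
# with 60 ms between the second measurement and the shutter opening.
d = predict_focus_distance(0.000, 20.00, 0.005, 19.95, 0.060)
print(round(d, 3))  # 19.35
```

Without the prediction, the lens would be focused at 19.95 m when the subject is actually at about 19.35 m.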

There are two other technologies for focusing.

Contrast Detect Autofocus (CDAF) uses the image sensor itself and a contrast detection method. The lens is moved, the sensor is read out, and the contrast is checked; this repeats, and if the contrast is improving the lens keeps moving in that direction, and if not, it goes back, hunting for best focus. Each readout can take tens of milliseconds, and because multiple readouts are required to hunt for focus, focusing can take a large fraction of a second or longer.
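The hunting loop can be sketched as a simple hill-climbing search in Python. This is an illustration under stated assumptions, not camera firmware: `lens_contrast` is a hypothetical stand-in for reading out the sensor and measuring contrast, reversing and halving the step after each overshoot is one common hunting strategy, and the 20 ms per readout is an assumed value within the "tens of milliseconds" range above.

```python
def contrast_detect_af(contrast, start, step, min_step, max_readouts=200):
    """Hill-climbing contrast-detect AF sketch.

    contrast(position) stands in for 'read out the sensor and measure
    contrast'. Returns (best_position, number_of_readouts).
    """
    position = start
    best = contrast(position)
    readouts = 1
    while abs(step) >= min_step and readouts < max_readouts:
        trial = position + step
        value = contrast(trial)
        readouts += 1
        if value > best:
            # Getting better: accept the move and keep going this way.
            position, best = trial, value
        else:
            # Worse: stay at the best position and hunt the other way
            # with a finer step.
            step = -step / 2
    return position, readouts


def lens_contrast(position, peak=3.7):
    """Hypothetical contrast curve with a single peak at best focus."""
    return 1.0 / (1.0 + (position - peak) ** 2)


pos, n = contrast_detect_af(lens_contrast, start=0.0, step=1.0, min_step=0.05)
readout_ms = 20  # assumed time per sensor readout and contrast check
print(f"focus at {pos:.2f} after {n} readouts, about {n * readout_ms} ms")
# focus at 3.75 after 12 readouts, about 240 ms
```

Even with this idealized, noise-free contrast curve, a dozen readouts are needed, which is why CDAF takes a large fraction of a second.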

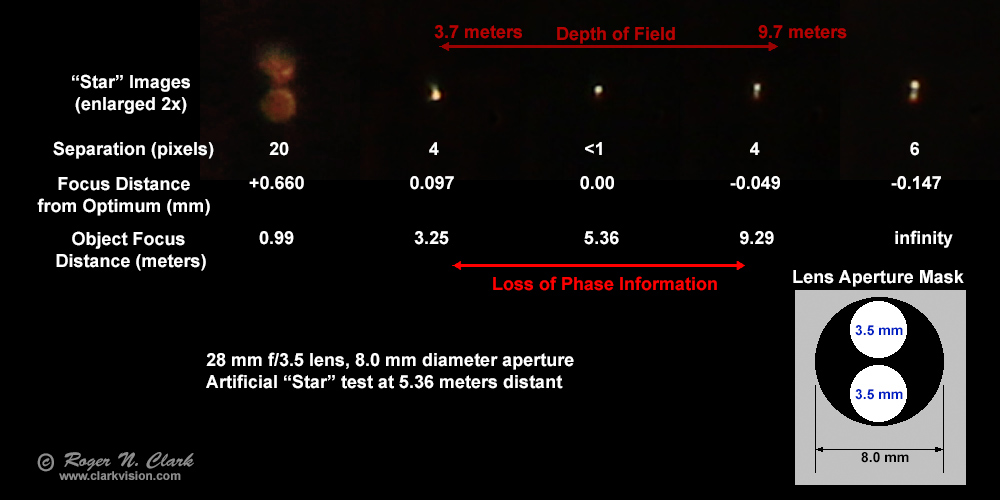

On-Sensor PDAF. The third method is a blend of PDAF and CDAF that puts a form of PDAF on the sensor itself. For example, Canon splits some pixels in half and reads out the two halves separately to form a simple version of PDAF. Remember that PDAF works in the out-of-focus field: on-sensor PDAF can only work when the image is out of focus (Figure 1). PDAF sensitivity depends on the phase separation between the two sides of the lens. If the PDAF sensors are close together, the range in the out-of-focus field where the phase information is useful is smaller. So the basic physics of on-sensor PDAF means it works over a narrower range and also stops working when the subject is in focus. In those cases, the camera must switch to slower contrast detect autofocus, CDAF.
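The dependence on separation follows from similar triangles. In a simplified geometric-optics model (an assumption that ignores diffraction and pixel structure), light from two zones of the lens whose centers are a baseline B apart, at a distance L from the sensor, lands on the sensor with a lateral separation of about s ≈ B·Δz/L when best focus is Δz away from the sensor. A smaller baseline therefore gives a smaller, harder-to-detect shift for the same defocus. The baselines, distance, and 4 µm minimum detectable shift below are hypothetical.

```python
def image_separation_um(baseline_mm, defocus_um, distance_mm):
    """Lateral separation of the two sub-aperture images, s = B * dz / L.

    Small-defocus approximation; baseline and distance in mm, defocus in
    microns, result in microns (the mm units cancel).
    """
    return baseline_mm * defocus_um / distance_mm


def min_detectable_defocus_um(baseline_mm, min_shift_um, distance_mm):
    """Smallest defocus that produces a measurable shift (inverse of above)."""
    return min_shift_um * distance_mm / baseline_mm


L = 50.0  # mm, hypothetical distance from the lens zones to the sensor
for baseline in (10.0, 4.0):  # mm, hypothetical wide vs narrow baselines
    s = image_separation_um(baseline, 50.0, L)
    dz_min = min_detectable_defocus_um(baseline, 4.0, L)
    print(f"B={baseline} mm: shift {s} um at 50 um defocus, "
          f"min detectable defocus {dz_min} um")
```

With these placeholder numbers, 50 µm of defocus gives a 10 µm shift for the wider baseline but only 4 µm for the narrower one.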

Mirrorless and more and more P&S cameras are relying on the third method of autofocus: a combination of CDAF and on-sensor PDAF. This is much better than the CDAF-only systems found on P&S cameras of a few years ago. In theory, on-sensor PDAF can often be as fast and as accurate as the PDAF in DSLRs, at least for initial focus acquisition. But tracking action is still a problem for on-sensor PDAF, and will remain one because of basic physics. When the subject is in focus, the on-sensor PDAF has no phase information (Figure 1), so it can't be used to continually and accurately track a moving and accelerating subject. From initial acquisition with the on-sensor PDAF, an initial velocity and direction might be measured and projected forward, but if the subject changes velocity (meaning the velocity toward or away from the camera, which changes even for a subject moving at constant speed whenever it changes direction), the on-sensor PDAF will not see the change until focus drifts off the subject.
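The argument can be explored with a toy model in Python. This is a sketch of the reasoning above, not of any camera's tracking algorithm: it assumes, as the text argues, that focus errors smaller than some hypothetical `deadband` produce no usable phase signal, and it uses a naive constant-velocity tracker that refocuses and re-estimates velocity only when an error is visible. Positions and units are arbitrary.

```python
def track(subject, deadband):
    """Per-frame focus errors for a toy constant-velocity focus tracker.

    subject: subject focus position at each frame (arbitrary units).
    deadband: errors smaller than this give no phase signal, so the
    tracker cannot see them; deadband=0 models a sensor that measures
    every frame. Between measurements the tracker extrapolates with its
    last velocity estimate.
    """
    lens = subject[0]
    velocity = 0.0
    last_fix_frame, last_fix_pos = 0, subject[0]
    errors = []
    for frame in range(1, len(subject)):
        lens += velocity  # predict where the subject has moved
        error = subject[frame] - lens
        errors.append(error)
        if abs(error) >= deadband:
            # Phase signal available: measure, refocus, and re-estimate
            # velocity from the previous measurement.
            velocity = (subject[frame] - last_fix_pos) / (frame - last_fix_frame)
            lens = subject[frame]
            last_fix_frame, last_fix_pos = frame, subject[frame]
    return errors


# Subject approaches at 1 unit/frame, then slows to 0.5 unit/frame.
subject = [0, 1, 2, 3, 4, 5, 5.5, 6, 6.5, 7, 7.5]
for deadband in (0.0, 0.75):
    worst = max(abs(e) for e in track(subject, deadband)[1:])
    print(deadband, round(worst, 2))  # prints 0.0 0.5, then 0.75 1.0
```

With a sensor that measures every frame, the worst error after the subject slows is one frame's worth (0.5 units). With the dead zone, the error grows to about 1.0 unit before the tracker sees it, and focus then drifts off again while the velocity estimate catches up.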

The bottom line is that if you want to track action, whether sports, kids at play, baby's first steps, birds in flight, or pets and wildlife running, the best performance will come from a DSLR with off-sensor PDAF and predictive autofocus. So no, DSLRs will not go away.

A problem with off-sensor PDAF is that it must be precisely calibrated for each lens, and that calibration can change with temperature. This calibration is called microadjustment. Lower-end DSLRs may not include microadjustment capability (presumably because it is assumed they will be used with lower-end consumer lenses that are not as sharp as higher-end lenses, so microadjustment is less critical). See Fast Simple on Site Microadjustment Methods Requiring no Computers or Charts.

Side Note on DSLR Microadjustments. Camera manufacturers could add a simple firmware microadjustment capability to all PDAF cameras that also have on-sensor PDAF and/or CDAF. I have posted this idea a couple of times on forums over the last couple of years, but so far no camera maker has caught on. The idea is to have a button that the user pushes to calibrate the microadjustment for a lens. When the button is pushed, the mirror is raised and a CDAF or on-sensor PDAF focus is done. Then the mirror is lowered and, for the same area of the image that was used to focus, the off-sensor PDAF measurement is made; the difference from the CDAF/on-sensor PDAF focus is the microadjustment calibration. This should take only a few seconds. Several cycles could be measured and an average calibration programmed in.
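The proposed calibration reduces to averaging a difference. A minimal sketch, assuming the camera can report both focus results as lens focus-motor positions in the same units; `microadjust_offset` is hypothetical, the readings are invented for illustration, and converting the offset into a particular camera's microadjustment units is camera-specific and not shown.

```python
from statistics import mean, stdev


def microadjust_offset(reference_positions, pdaf_positions):
    """Average offset between reference focus (CDAF or on-sensor PDAF,
    mirror up) and off-sensor PDAF focus (mirror down), over several cycles.

    Returns (offset, spread); a large spread suggests repeating the cycles.
    """
    if len(reference_positions) != len(pdaf_positions):
        raise ValueError("measurements must be paired")
    if len(reference_positions) < 2:
        raise ValueError("need at least two cycles to estimate the spread")
    diffs = [r - p for r, p in zip(reference_positions, pdaf_positions)]
    return mean(diffs), stdev(diffs)


# Five hypothetical calibration cycles (focus-motor steps).
reference = [100, 101, 100, 99, 100]
pdaf = [97, 98, 97, 97, 96]
offset, spread = microadjust_offset(reference, pdaf)
print(offset, round(spread, 2))  # 3 0.71
```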

Live View is not Live! Another factor with moving subjects for mirrorless and P&S cameras, which require live view to frame the image, is that live view is really a delayed view, and the faster the action, the harder it is to keep up. For example, if the sensor is being read out at 30 frames per second, the action is 33 milliseconds ahead of the live view image. With action like a running play in baseball or football, composing the subject is difficult at best. Fast birds in flight can be nearly impossible. Use of live view also heats the sensor, increasing noise.
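The lag arithmetic is easy to make concrete. A minimal sketch, assuming the display lags by one readout period (the 33 ms in the example above) plus an optional extra processing delay, which is unknown and left at zero; the 10 m/s subject speed is a hypothetical example.

```python
def live_view_lag(frame_rate_hz, subject_speed_m_s, extra_delay_s=0.0):
    """Return (lag in seconds, distance in meters the subject moves during the lag)."""
    lag_s = 1.0 / frame_rate_hz + extra_delay_s
    return lag_s, subject_speed_m_s * lag_s


lag, moved = live_view_lag(30, 10.0)  # 30 fps readout, subject at 10 m/s
print(f"{lag * 1000:.0f} ms lag, subject moves {moved:.2f} m")
# 33 ms lag, subject moves 0.33 m
```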

In long-exposure, low-light photography, dark current can be a significant noise source. CMOS sensors are not heated during the exposure, and in better digital cameras some electronics are turned off to reduce heat near the sensor. The main heating in digital cameras (DSLR, mirrorless, point-and-shoot) comes from readout, both at the end of the exposure and, more so, from using live view. So for long-exposure photography, use an optical viewfinder and minimize sensor heating from live view. With a mirrorless camera, it is harder to avoid heating because live view must be used to see the image, including for framing.
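To see why a few degrees of sensor heating matters, here is a minimal sketch. It assumes the commonly quoted rule of thumb that dark current roughly doubles for every ~6 °C rise (the actual doubling temperature varies by sensor), and that dark-current noise is the square root of the accumulated dark electrons. The reference dark current and the temperatures are placeholders, not measurements of any camera.

```python
import math


def dark_noise_electrons(exposure_s, temp_c, dc_ref=0.05, temp_ref_c=20.0,
                         doubling_c=6.0):
    """Dark-current noise (electrons per pixel) for one exposure.

    Assumes dark current dc_ref (electrons/pixel/s) at temp_ref_c that
    doubles every doubling_c degrees C; all defaults are placeholders.
    """
    dark_current = dc_ref * 2 ** ((temp_c - temp_ref_c) / doubling_c)
    return math.sqrt(dark_current * exposure_s)


# A 300 s exposure with the sensor at 20 C vs. 12 C warmer after live view use.
print(round(dark_noise_electrons(300, 20.0), 2),
      round(dark_noise_electrons(300, 32.0), 2))  # 3.87 7.75
```

Under these assumptions, a 12 °C warmer sensor quadruples the dark current and doubles the dark noise for the same exposure.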

See this dpreview discussion, which presents data showing the sensor heating.

Sensor heating may be mitigated to some degree in future models. The move to 4K high frame rate video recording will generate a lot of heat, so methods to reduce and dissipate heat will be needed.

Other factors often cited in mirrorless-versus-DSLR buying discussions are the lower weight and smaller size of mirrorless cameras. Here is a discussion with charts on dpreview.com that shows some of the differences. The smaller DSLRs are similar in size and weight to some mirrorless cameras, so the size/weight difference is not large. For a given sensor size and lens performance (focal length and f/ratio), the lenses will be similar in size, so there is little savings there. Another factor is that mirrorless cameras use more battery power because live view is used for framing, so battery life is shorter and one needs to carry more spare batteries, which changes the "mirrorless is lighter" equation.
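The battery point can be made concrete with simple arithmetic. A minimal sketch in which every number is a hypothetical placeholder rather than a specification of any real camera; the function counts the battery in the camera as one of the batteries needed and assumes the body weight excludes it.

```python
import math


def kit_weight_g(body_g, lens_weights_g, battery_g, shots_per_battery, shots_planned):
    """Carried weight: body (without battery) + lenses + enough batteries for the outing."""
    batteries = math.ceil(shots_planned / shots_per_battery)
    return body_g + sum(lens_weights_g) + batteries * battery_g


# Hypothetical placeholders: same lens, different bodies and battery life.
dslr = kit_weight_g(700, [900], 60, shots_per_battery=900, shots_planned=2000)
mirrorless = kit_weight_g(600, [900], 50, shots_per_battery=350, shots_planned=2000)
print(dslr, mirrorless)  # 1780 1800
```

With these placeholder numbers, the 100 g body saving is more than offset by the extra batteries.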

Also see this discussion on AF speeds on DSLR vs. Mirrorless on dpreview.

Mirrorless cameras occupy a niche: they are similar in size and weight to smaller DSLRs but can be smaller and lighter in some circumstances. If you need only some wide-angle lenses that are smaller than their DSLR counterparts, then mirrorless can save some weight and bulk. But as the focal lengths you need increase, lens size and weight dominate and will be about the same as for DSLRs with equivalent-sized sensors. With mirrorless you give up fast action-tracking performance, which matters if photographing action is important to you.

The bottom line: before buying mirrorless to save weight and bulk, compare with the smaller DSLRs, especially if you already have a lens set for a DSLR. Understand the pros and cons of each and what capability you might give up. Check the latest models, as the market is constantly changing.

This kind of testing is used to assess aberrations of lenses and mirrors, and each type of aberration will change the zones around the lens that come to focus. I have used the knife edge test to assess aberrations when I am grinding and figuring optics, and for assessing quality of finished optical components and commercial optics.

So next, how close are the microlenses to the focus? Hamamatsu, a sensor manufacturer, shows the microlens at a distance from the pixel no greater than the pixel pitch. Pixel pitches are in the 3 to 6 micron range for modern digital cameras. Canon also shows its Dual Pixel on-sensor AF design with the microlens about one pixel pitch above the pixel.

So with the microlens and pixel separated by just a few microns at the focal point, well within the circle of confusion (which for the example in Figure 1 is on the order of +/- 50 microns), there is no phase information, only information on aberrations.

Dpreview's article Olympus E-M1X vs Nikon D5: shooting tennis illustrates the failure of on-sensor phase detect autofocus in the Olympus camera versus the Nikon DSLR with off-sensor phase detect autofocus. "...the way in which the cameras missed shots is worth noting. With the Nikon, the very few shots it missed were usually toward the start of a burst and focus mostly corrected itself within a few frames. With the Olympus, slightly miss-focused shots seem to be sprinkled throughout otherwise in-focus bursts. This made picking my selects tricky - on more than one occasion that random missed shot coincided with my frame of choice."

As described above, when the subject is in focus the on-sensor PDAF has no phase information (Figure 1), so a change in subject velocity goes unseen until focus drifts off the subject. With the changing velocities of the tennis players in the dpreview test, we see the loss of phase and the subjects going out of focus; then the on-sensor phase is regained and a new focus is achieved. This in-and-out-of-focus cycle repeats as the tennis players move randomly and change velocity, because the camera loses phase information whenever the subject is in focus.

References and Further Reading

The Mechanism Behind Fast, Comfortable Autofocus (Canon.com)

Jang et al., 2016, Hybrid Auto-Focusing System Using Dual Pixel-Type CMOS Sensor With Contrast Detection Algorithm, Imaging and Applied Optics Congress, https://doi.org/10.1364/ISA.2016.IW3F.2

http://clarkvision.com/articles/dslr-vs-mirrorless-cameras/

First Published May 3, 2015

Last updated April 30, 2019