A Revolution Coming to Photography

with Game Changing New Standards for Dynamic Range and Color Spaces

and Astounding New High Dynamic Range Display Technology

by Roger N. Clark

There is a revolution coming to Photography, and it has already arrived for video.

Photographers often talk about dynamic range and seek cameras with "high"

dynamic range. They then post process that high dynamic range into lower

dynamic range for display or print. But the real world IS high dynamic range,

even in a typical indoors scene. New display technology is able to

present images and video in a more natural looking dynamic range, and new standards

have been developed to take advantage of the dynamic range now and into the

future. This new forward-looking standard and technology is, to put it mildly,

jaw dropping, knock your socks off, and game changing. In this article, I'll

explain the standards and technology, show why it is game changing,

and where the technology stands for photography and video. Once you actually

see this technology, images and video on our current low dynamic range

displays and prints will look flat, lifeless, and limited in color.

The Color Series

Contents

Introduction

High Dynamic Range (HDR)

Color range (gamut)

Sound: Dolby Atmos and DTS:X

Display Resolution

Data rates and streaming video vs blu-ray disks

Chroma Sub-Sampling

More Technical details

Discussion and Conclusions

References and Further Reading

All images, text and data on this site are copyrighted.

They may not be used except by written permission from Roger N. Clark.

All rights reserved.


Introduction

A new stunning revolution in color imaging and video is underway.

There have been a number of revolutions in photography and videography

over the years, for example, the change from film to digital cameras, or

the change from CRT screens to LCD flat screens. The next revolution will

also be profound and it involves better color with more dynamic range.

It is amazing technology, as big as the change from black and white

to color in my opinion. And it is here.

If you are in the market for a new TV, read this article and the next

one before purchasing.

When I first began to investigate this new technology, circa 2017, I did

not predict it would have such an impact. After all, it seemed that the

computer displays and TVs of the time had plenty of dynamic range.

I was stunned when I saw the first high dynamic range 4K movie

on a monitor that actually delivered true high dynamic range. The color and realism

were far beyond anything I had ever experienced in computer or TV displays.

Note: many so-called 4K HDR TVs are not actually high dynamic range.

There is currently only one technology that really shines in this regard

and that is Organic Light Emitting Diode, OLED.

In writing this, I can only describe the effects, because unless you

view the new technology yourself, it is somewhat like trying to describe

the advent of color TVs when you are only viewing with a black and

white monitor. Sure, we see in color, so can understand color versus

black and white. We also see in high dynamic range, so perhaps I can

try and describe the impact of the new technology.

There are 5 factors, each making a profound change in image and sound

quality, in order (in my opinion) of impact:

- 1) High Dynamic Range (HDR). The dynamic range of current displays

is quite low compared to the real world. Imagine looking at a real

sunset. The sun can be quite bright. Look at a piece of paper in

your hand illuminated by the setting sun: it may appear whitish but not as

bright as the sun. A photo or video of the scene shown on a standard

computer monitor or print has both the sun and paper at about the

same brightness, thus not the real intensities. HDR recording and

displays change that and show intensities much closer to reality.

Definitions:

HDR: High Dynamic Range. New technology; currently OLED is far ahead of other display technologies.

SDR: Standard Dynamic Range. This includes most current LCD/LED flat panel computer monitors and TVs, as well as all print.

The human eye can see detail over about 14 stops, but we perceive

brightness over a much larger range. The extreme example is the sun:

we can perceive detail in bright sunlit snow as well as in shade cast

by a tree in the scene, and while the snow can appear uncomfortably

bright, we also see the sun as much much brighter than the snow.

Same with sun glint off water or metal--we see it as very bright but

photos showing the scene on a SDR monitor or print can't show that

brightness. Realism is lost. But HDR displays can get us closer to

that reality. The difference is profound. In fact the increased dynamic

range can give a 3-dimensional (3D) impression. I have observed

this effect myself, and others have commented about this too.

There are multiple types of TVs and computer monitors, including

those saying they are HDR because they can accept an HDR formatted signal.

But many aren't really HDR, having limited dynamic range (often less

than 1000, or 10 stops). The current top end HDR is Organic Light Emitting

Diode, OLED, which has dynamic range over one million! That adds

reality and 3-dimensionality to scenes close to the real view, and

has to be seen to really understand the impact. When this was first

described to me, I thought, "Oh, OK, that's nice." I had to experience

it first hand to understand the truly jaw dropping effect HDR high

color gamut OLED displays can show. I have also reviewed and purchased

other technologies, and OLED is far above other technologies

as of this writing. I'll describe these differences below.

The high dynamic range on an OLED TV makes scenes much more realistic,

from glint off water, to lights in a room, to backlit hair, to a fire.

On a standard LED TV, 4K or not, a fire looks like a flat dull orange

blob. But on a high dynamic range display, the fire looks bright

orange, just like looking at a real fire with detail in the bright

dancing flames. You can see detail in the flames and they appear very

bright. Viewed in a dark room, the light from the fire flickers off

the walls, just like a real fire. The blacker blacks on OLED add so

much contrast at the low end, it makes many scenes look 3-dimensional.

I did not realize the difference until I saw it. A good 4K HDR movie

on OLED is simply stunning. Photos can be this way too.

Other HDR display technologies use what is called local dimming, where

the back light is dimmed for darker areas of a scene. But because

local dimming involves regional dimming over many pixels, a bright object

surrounded by dark areas will show halos. On cheaper TVs/monitors,

there are fewer dimming zones and the halo around a bright object may

look square! OLED avoids this because each pixel can be the full brightness

range from pure black to as bright as the pixel can get. That enables

edge contrast, pixel to pixel, unmatched by any other technology, and it

is that edge contrast that makes the difference.

- 2) Color range (gamut). The human visual system can perceive a marvelous

range of colors from violet to deep red, but prints and standard computer

monitors can not show that full range. That is changing with new

computer display technology with redder reds, greener greens, and

bluer blues. With current LED and CRT computer monitors, shades of

red are all a red-orange, and shades of blue are one blue. With the

new color standard (called Rec 2020) more reds show differences and

real reds look redder, like the real thing. Same with blues and

greens.

The blacker blacks of OLED have another effect. The color range

(gamut) of a standard monitor decreases as intensity drops, so

at the low end there is little to no color at all. For example,

a typical modern LED computer display that advertises close to 100%

Adobe RGB color gamut may fall below sRGB at 3 stops below maximum

brightness! This loss of color gamut is not reported by any review

site I have seen. This loss of gamut is not how we perceive a real

scene, with the scene turning gray into the darker parts of the scene.

Another problem is dark areas on a standard monitor/TV are gray, not

black. OLED with its blacker blacks due to its higher dynamic range

shows colors in dark scenes like we see in reality. It is a stunning

difference that I had no idea would be so profound because we have been

conditioned with the current low dynamic range technology for decades.

- 3) Sound: Dolby Atmos and DTS:X change surround

sound from 2-dimensional to 3-dimensional. For example, a plane

flying overhead sounds like it is actually passing overhead (with a minimum of

7-channel surround). Immersive 3-dimensional sound is another step

toward realism. Imagine walking through a forest and hearing birds

singing in the trees above you. This is now possible to experience

in your living room. More specifically, these new audio formats

are object based, meaning the sound source is encoded to come from

a specific 3-dimensional position and the receiver interprets that

position with your speaker setup to deliver the closest sound it can

for that position. This is game changing.

- 4) Resolution (displays of 4K, 8K and larger). While prints can show

wonderful detail, computer monitors and TVs have been low resolution,

even so-called high definition (HD, 1920 x 1080 pixels = 2K). That is

changing with higher resolutions 4K (3840 x 2160 pixels),

8K (7680 x 4320 pixels) and larger (plus small variations on these

pixel counts). The fine detail of 4K+ monitors combined with richer

colors and high dynamic range combine to make a profound change in the

information content and realism the technology displays.

- 5) Data standards. The movie industry has been very forward

looking, setting standards for color and dynamic range that work

today and will still work with technology well into the future.

The standards include brightness along with dynamic range and color

so images and video produced today might be shown in even better

color, dynamic range, and intensity with newer display technology in

the future, without changing the current source format and data.

By this I mean if you encode your video or photo in one of these

new format standards, it can look even better on a future display

without changing your data file.

Put all of the above together and the change in computer/TV display impact

is jaw dropping. (I can't say jaw-dropping enough in this article!)

This revolution has been happening over the last several years and is now

quite mature in new TVs and all but low end computers (laptops are still

behind). The internet, as I write this, seems to focus on resolution (4K,

8K), and the video processing tutorials mainly focus on 10-bit color as

reducing noise. In my opinion, these ideas completely miss the point.

HDR output requires 10 or 12-bits per color to be effective, along with

the Rec 2100 standard. That is the game changer, not simply resolution

and noise. Given images with excellent light, composition and subject,

I would prefer 2K content in HDR to 4K content in SDR. For movies,

I look for 1) great story, acting, and cinematography, 2) HDR, and 3)

sound in Dolby Atmos or DTS:X. Resolution is 4th, and of course having

the first 3 plus high resolution (4K, 8K) just knocks your socks off so

far you may never find them!

All this new technology is still working to meet the forward looking

standards. The problem for electronics is data volume and data rates

and that is taxing current technology. In order to deliver the data,

compression is used. Data rate is also a problem even on your local

computer trying to deliver high resolution 4K and high dynamic range

content to your computer monitor. I will review the current status

and try and keep this article up to date as the technology changes.

Fortunately the technology has come a long way in the last couple of years

and prices have started to become more reasonable for high end imaging.

The new technology requires new hardware and software. HDR images

are 10 or 12 bits per color with specific coding for intensity.

Current image and video (SDR) is 8-bits per color with no specific

coding for intensity. The change from 8 to 10 or 12 bits per color

requires new hardware (e.g. new computer graphics boards, and new TVs),

as well as new software. Most TVs on the market these days have this

new capability to process high dynamic range video, but only OLED has

the true blacks pixel to pixel that show the astonishing impact.
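To put those bit depths in numbers, here is a minimal Python sketch (the function names are mine, purely for illustration) that counts the code values available per color channel, and the total number of RGB combinations, at 8, 10, and 12 bits.

```python
def levels_per_channel(bits: int) -> int:
    """Number of distinct code values one color channel can take."""
    return 2 ** bits


def total_colors(bits: int, channels: int = 3) -> int:
    """Number of distinct color triplets at the given bits per channel."""
    return levels_per_channel(bits) ** channels


for bits in (8, 10, 12):
    print(f"{bits:2d} bits/color: {levels_per_channel(bits):5d} levels per channel, "
          f"{total_colors(bits):,} possible colors")
```

Going from 8 to 10 bits gives 4 times as many levels per channel. That matters because HDR spreads those levels over a much larger brightness range than SDR does.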

Figure 1. The current industry leader for HDR content is the film industry,

followed by the gaming industry, and seemingly unaware of the new

technology (in 2020) is the still photo industry.

Note, this scene is a composite, both natural colors. The night sky was

obtained in deep twilight (sunrise is to the left) on the Serengeti, and the

giraffes were photographed after sunrise, also on the Serengeti.

Photographing the Milky Way in deep twilight is one of the few

conditions when the night sky actually appears blue.

Now to more technical details.

High Dynamic Range (HDR)

Photographers have learned that High Dynamic Range (HDR) meant taking High

Dynamic Range images from a camera, sometimes using multiple exposures

of different exposure times and compressing that dynamic range into

the range that can be printed or displayed on a computer monitor or TV.

But the new High Dynamic Range (HDR), the HDR discussed in this

article, changes the term to mean the capability of the output device.

HDR is not simply brighter; it is more detail in the tonal range and the

tonal range is larger than SDR. For example, low cost LED HDR displays

may be able to present brighter images than OLED, but OLED presents

much darker levels, so has a greater dynamic range.

The dynamic ranges of several technologies are listed in Table 1.

Table 1. Dynamic range is key to realism.

| Technology | Dynamic Range | Stops |
|---|---|---|
| Display technology: | | |
| Paper | ~1:30 | ~5 |
| CRT Monitor | ~1:100 | ~7 |
| LCD Monitor | ~1:few hundred | ~9 |
| LED Monitor | ~1:500 - 1000 | ~9 to 10 |
| LG Nano LED | ~1:1200 - 2400 | ~10 - 11 |
| Samsung QLED (2019) | ~1:3700 - 5700 | ~12 - 12.5 |
| OLED (2016+) | > ~1:1 million | > 20 |
| Human eye: | | |
| Within view | ~1:15,000 | ~14 |
| Adaptable within a scene | ~1:1,000,000 | ~20 |
| Total range of adaptation | ~1:1 billion to 1:35 billion | ~30 to 35 |
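The Stops column in Table 1 is the base-2 logarithm of the contrast ratio, because each stop is a factor of 2. A small Python sketch of the conversion follows; the function names are illustrative, and the table rounds its values loosely, so expect small differences.

```python
import math


def ratio_to_stops(contrast_ratio: float) -> float:
    """Convert a contrast ratio (e.g. 1000 for 1:1000) to photographic stops."""
    return math.log2(contrast_ratio)


def stops_to_ratio(stops: float) -> float:
    """Convert stops back to a contrast ratio."""
    return 2.0 ** stops


examples = [
    ("Paper", 30),
    ("LED monitor", 1000),
    ("Eye, within view", 15_000),
    ("OLED", 1_000_000),
]
for name, ratio in examples:
    print(f"{name:18s} 1:{ratio:>9,} = {ratio_to_stops(ratio):4.1f} stops")
```

Run the other way, `stops_to_ratio(30)` gives about one billion, the lower end of the eye's total range of adaptation in the table.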

HDR is independent of resolution and color gamut. For example, many

movies and streaming shows are at 2K resolution and in HDR.

The Queen's Gambit on Netflix (2020) is one superb example.

There is no HDR "format war." There are several software standards, both open

and proprietary (licensed) and it is a matter of software to use those

standards. But in every case, even content in a proprietary standard like Dolby Vision

will fall back to the open standard HDR10 if the hardware does not have Dolby

Vision software.

HDR can be compatible with SDR displays.

There are standards for HDR video and HDR still images.

Currently, there are 5 different common video HDR formats.

- HDR10: Open standard, most common. 10 bits/channel, one mode for the entire video stream (2015).
- HDR10+: Open standard, 10 bits/channel, changing modes per video frame (2017).
- Dolby Vision: Proprietary ($3/TV). 10 or 12 bits/channel, changing modes per video frame.
- HLG (Hybrid Log Gamma): BBC and NHK standard, 10 bits/channel, royalty free. Some digital cameras record HLG.
- SL-HDR1: Technicolor and others (2017); not used much yet.

16-bit TIFFs and raw files can be developed into HDR.

Still image HDR formats:

- HEIF (High Efficiency Image File Format) is an open standard, ISO/IEC 23008-12 (MPEG-H Part 12). This file format is becoming widely used and can store 10 bits/channel in HDR format. Some Canon and Sony cameras write still images in this format. GIMP, Photoshop and other software can read the files. There is currently (February 2022) no browser support.
- JPEG XL supports wide color gamuts and HDR like HEIF, and can do both lossy and lossless image compression. It too is a free open standard, ISO/IEC 18181, and is supported by ImageMagick, GIMP, and macOS, and has preliminary browser support (Chromium, Firefox).
- JPEG XT: Open standard, open software, ISO/IEC 18477-5,7,8,9. As of 2022, it does not seem to be catching on in a big way.
- JPEG-HDR is part 2 of the JPEG XT standard. Open standard, open software, ISO/IEC 18477-2. As of 2022, it does not seem to be catching on in a big way.

Currently, no TV that I am aware of will read still image HDR formats,

only SDR JPEGs. To display still image HDR, one must convert to a video

stream with HDR. LG's OLED TVs do a pseudo HDR from 8-bit JPEGs that is

pretty good. More on this in the next article. We need pressure from the still image

photo community to get TV manufacturers to include these formats. We also

need the still image raw converters and photo editing software to include

these standards, along with Rec 2020 color gamut and Rec 2100 standards.

Color range (gamut)

There are multiple standards for color. For an introduction to modern color

standards and CIE Chromaticity, along with why these standards are not

standards for human color vision, see:

Color Part 1: CIE Chromaticity and Perception and

Color Part 2: Color Spaces and Color Perception.

These articles show that the standard CIE chromaticity is a significant

compromise originating from people not wanting to perform numerical

integrations on negative numbers by hand (circa the 1930s), so they torqued the data to be all

positive then invented "approximation matrices" to try and correct

that perversion back toward reality. It works reasonably well for many

colors in the natural world, like for trees, rocks and soils, people's skin,

animal fur, but not as well for materials with unusual spectral shapes

(many synthetic materials), and departs further from reality for very

unusual things, like the true color of Rayleigh blue sky, to unusual

light sources, like neon lights or emission nebulae in the night sky.

The main color spaces in use are:

- sRGB A standard for web colors and (2K) HD video.

- Adobe RGB A standard for still photography.

- DCI-P3 A standard with color gamut similar to Adobe RGB; used mainly by the film industry.

- REC 2020 The idealized standard for 4K, 8K video.

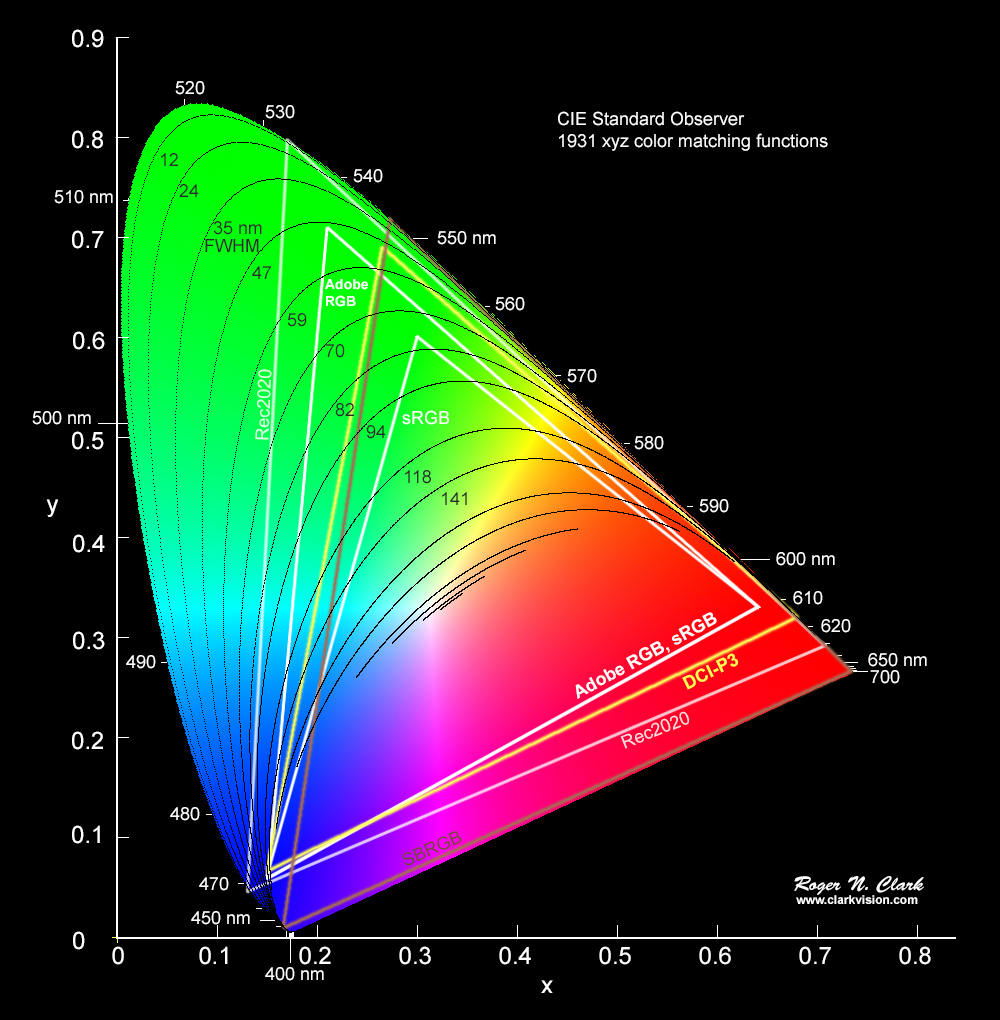

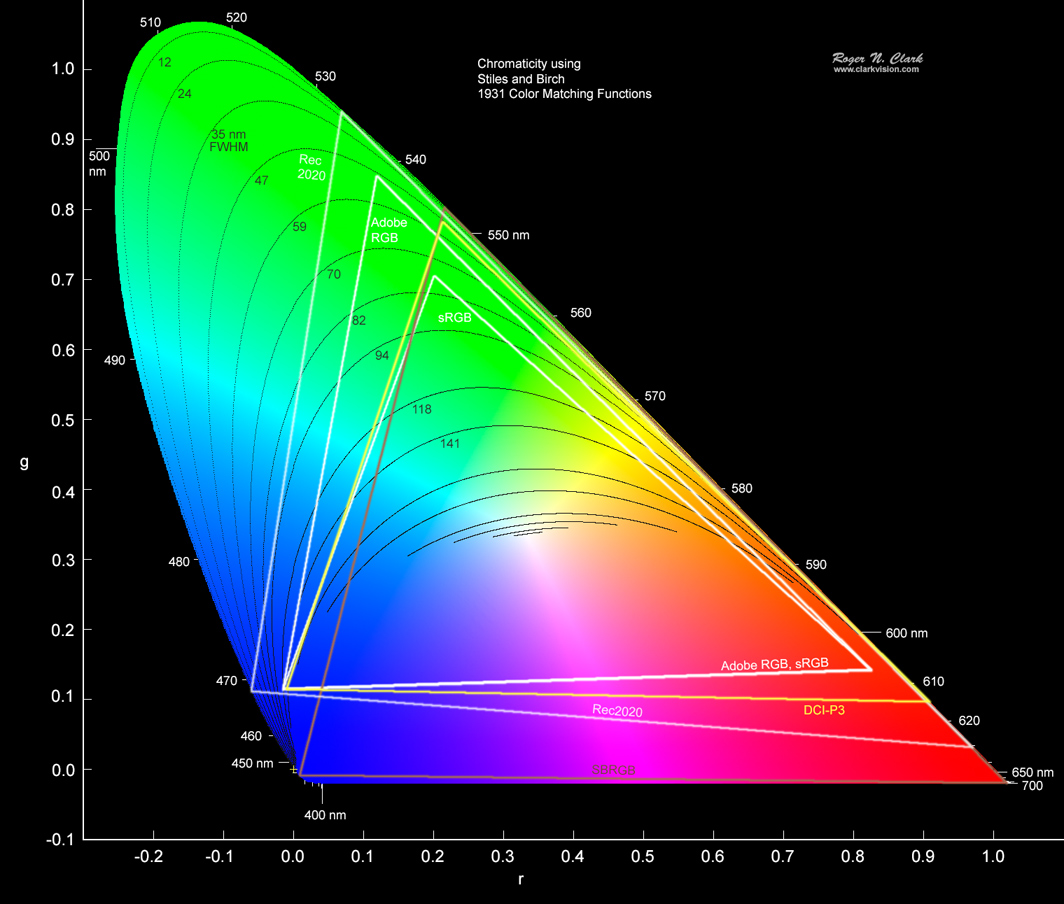

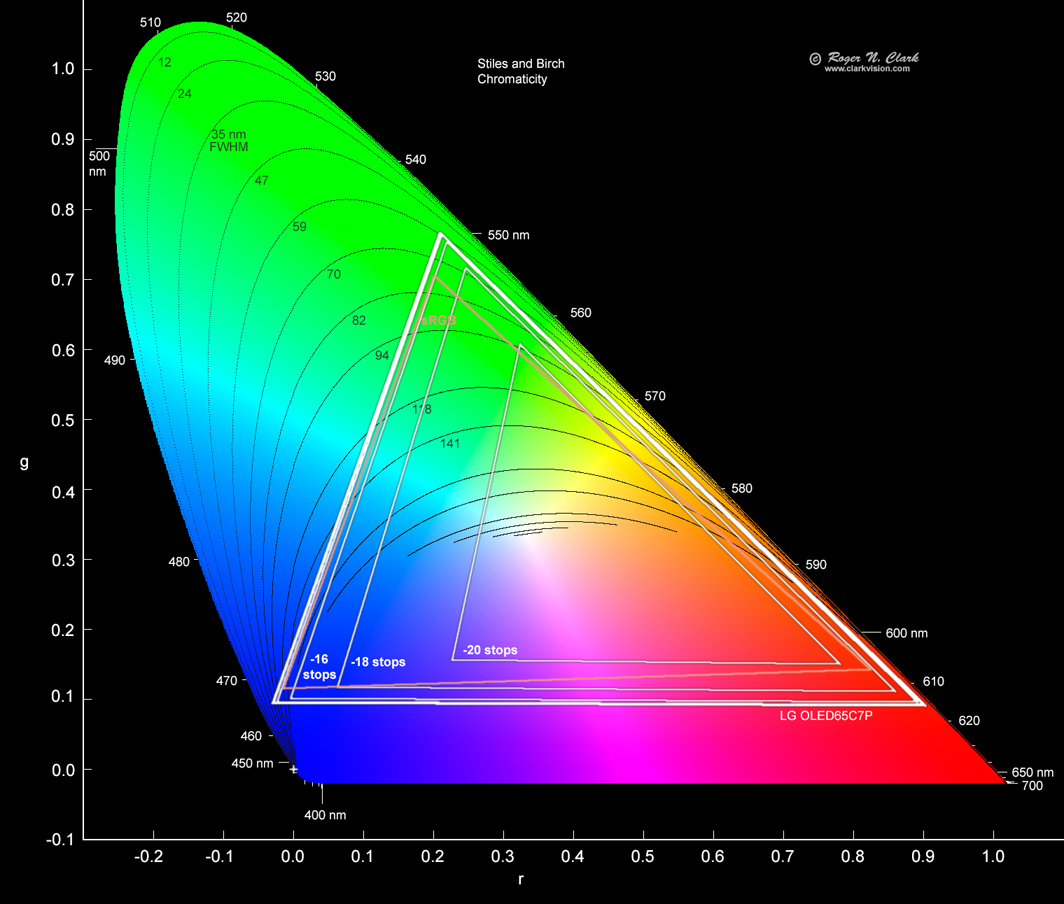

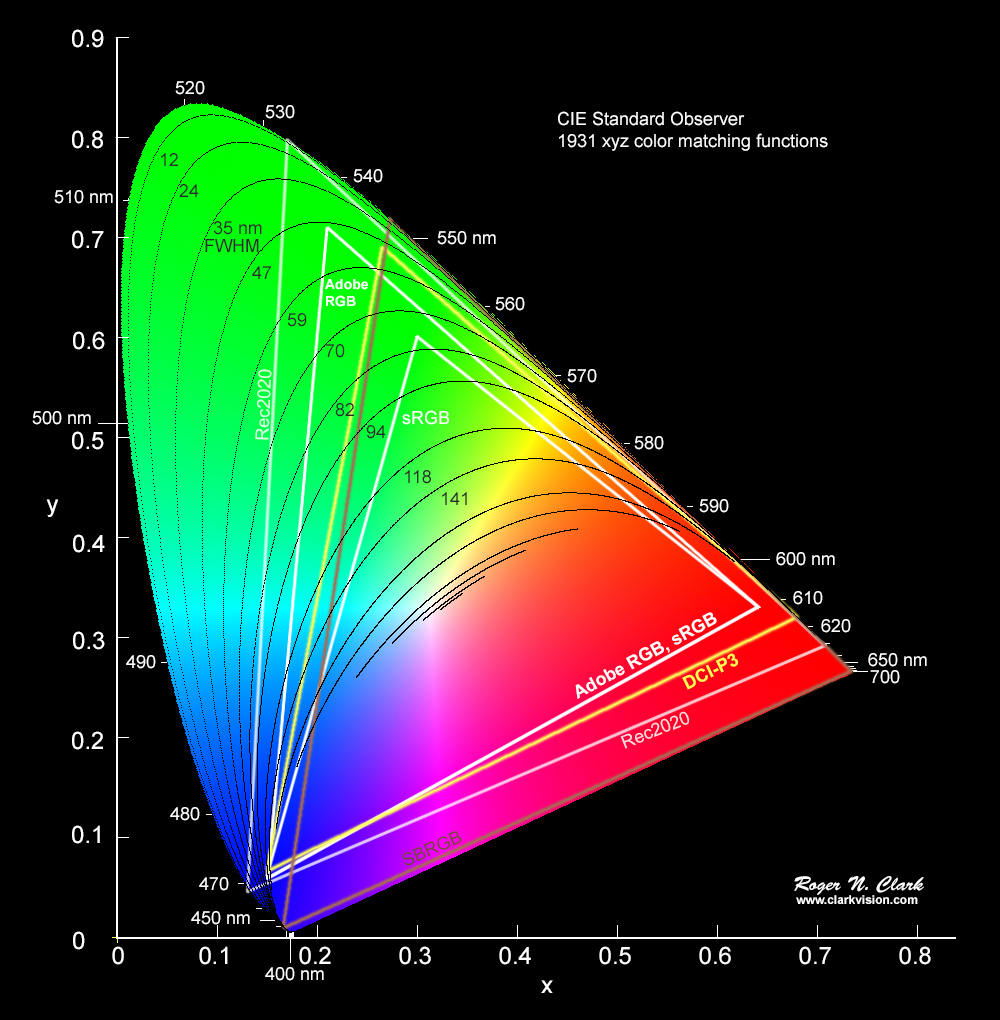

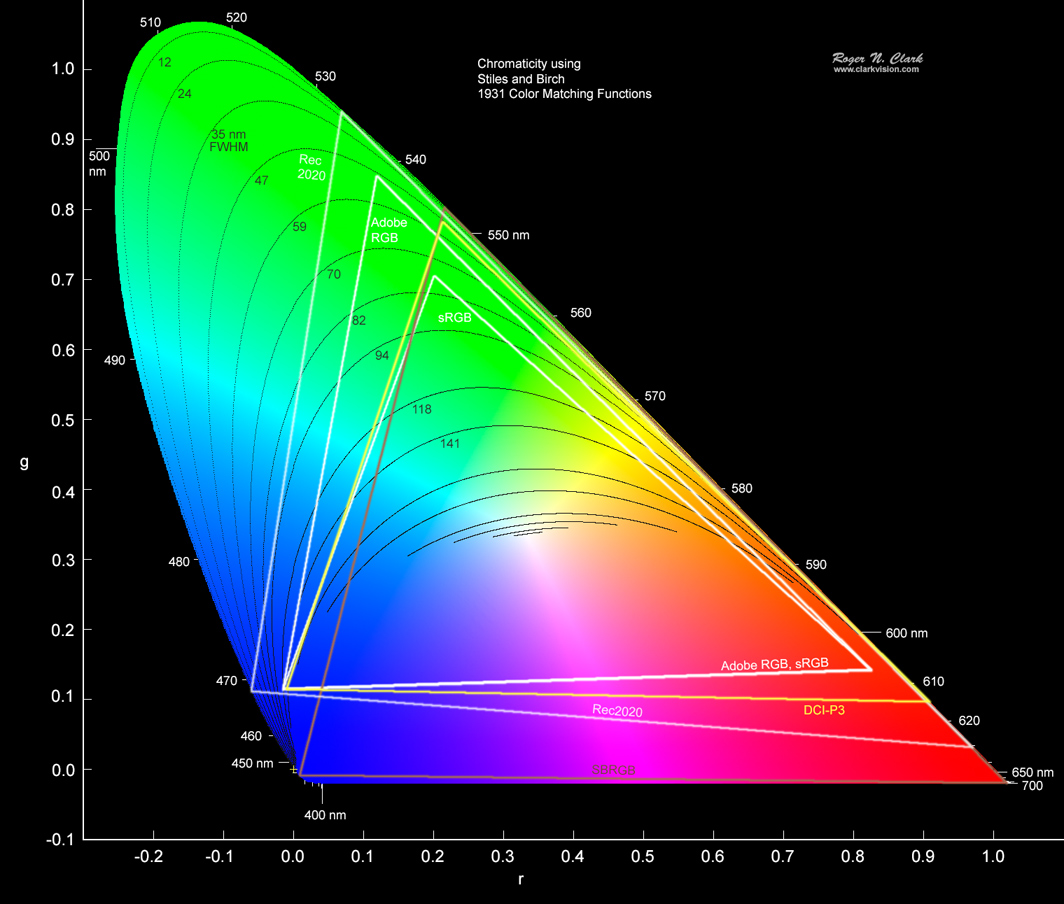

The color gamuts for the above standards are plotted on a CIE chromaticity diagram

in Figure 2a. As noted in the above articles, with the approximations of CIE chromaticity,

I prefer to show the color spaces on the Stiles and Burch chromaticity diagram,

which has not distorted the original data on the eye. The diagrams are similar

(compare Figures 2a and 2b), but the Stiles and Burch chromaticity diagram better separates

the blues and reds. Note, if you are viewing these diagrams on a monitor calibrated in

sRGB or Adobe RGB, you probably can not see the difference among the deep blues

or deep reds. The triangle vertices denote the primary color wavelengths and

bandpasses (saturation).

Note that the Adobe RGB and sRGB blue primaries are the same, and the reds are the same, but the

greens are different with the Adobe RGB green much greener. DCI-P3 has about the

same blue primary, but redder reds and a green primary between the sRGB and Adobe RGB primary.

On an Adobe RGB or sRGB calibrated monitor reds look red-orange, especially compared to a

DCI-P3 monitor. This has led some people to complain that these new generations of

TVs look too saturated in the red. Ironically, the new TVs are closer to red accuracy than

the older generation sRGB and Adobe RGB monitors!
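One crude way to compare these color spaces numerically is the area of each gamut triangle on the CIE xy chromaticity diagram. The Python sketch below uses the red, green, and blue primary coordinates from the published specification of each color space (they are not taken from this article), and the function and variable names are mine. Area in xy is not perceptually uniform, so treat the numbers only as a rough guide.

```python
# CIE 1931 xy chromaticity coordinates of the R, G, B primaries,
# as published in each color space's specification.
PRIMARIES = {
    "sRGB":      [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)],
    "Adobe RGB": [(0.640, 0.330), (0.210, 0.710), (0.150, 0.060)],
    "DCI-P3":    [(0.680, 0.320), (0.265, 0.690), (0.150, 0.060)],
    "Rec 2020":  [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)],
}


def triangle_area(vertices):
    """Area of a triangle from its three (x, y) vertices (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = vertices
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2.0


areas = {name: triangle_area(v) for name, v in PRIMARIES.items()}
for name, area in areas.items():
    print(f"{name:10s} area = {area:.4f}  ({area / areas['sRGB']:.2f}x sRGB)")
```

The coordinates show the same pattern described above: sRGB and Adobe RGB share red and blue primaries and differ only in green, while DCI-P3 keeps the same blue but moves the red primary outward.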

Figure 2a. The CIE chromaticity diagram with bandwidth contours (FWHM) and five color spaces are shown.

FWHM is Full Width at Half Maximum, the width of a Gaussian profile at half maximum.

This modern CIE diagram is an approximation of the original data for the eye color response.

See

Color Part 1: CIE Chromaticity and Perception

for more information on the approximations.

Figure 2b. Several commonly used color spaces are shown plotted

on Stiles and Burch chromaticity. SBRGB is the Stiles and

Burch 1931 color space, the original data from which the

CIE chromaticity was derived using approximations.

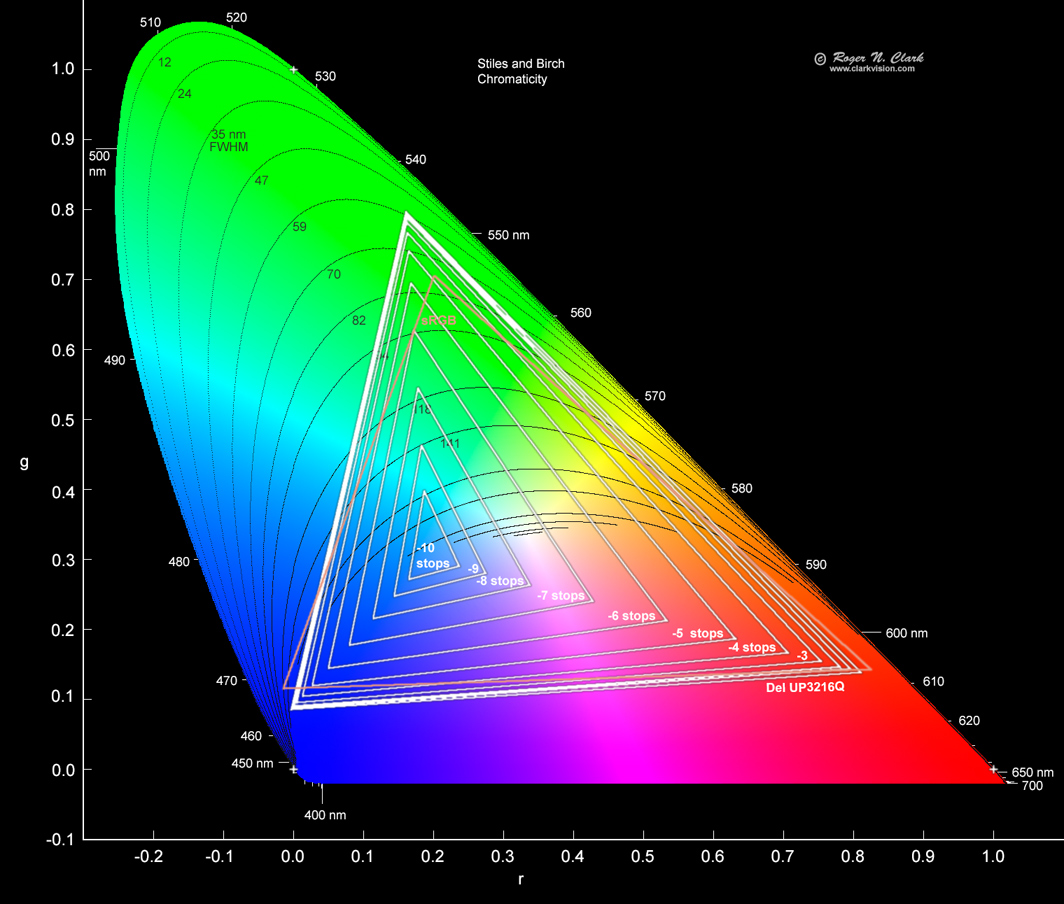

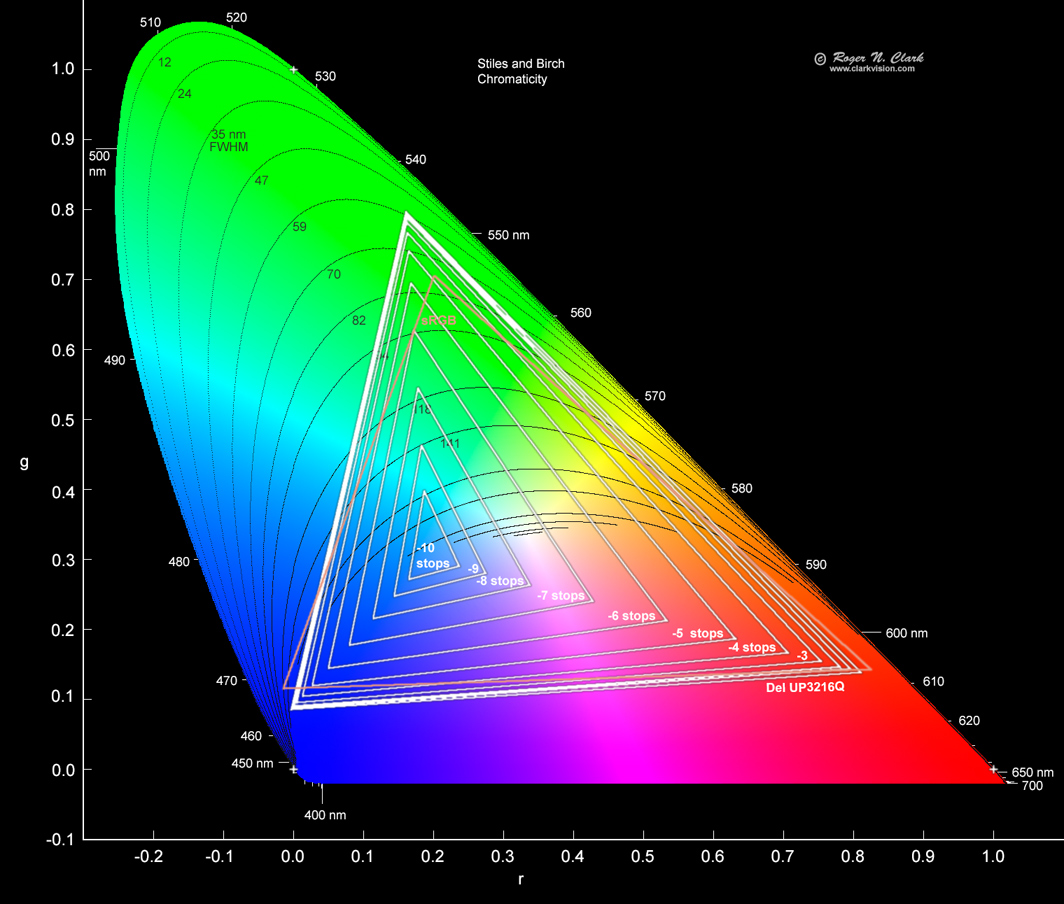

A typical backlit LED/LCD monitor is shown in Figure 3. Such backlit monitors have low dynamic range,

typically under 1000, and blacks appear as a dark gray. You can test this yourself: fill

your monitor with a black image (or a dark image with some actual black areas) in a dark room

with no lights. Does the black actually look black? On backlit monitors, it does not. More typically

we see a dark gray, or slightly off-color gray. This low contrast means that as

scene intensity decreases, so does the color range.

On the monitor shown in Figure 3, the color gamut drops below sRGB at only 3 to 4 stops below

maximum brightness! That isn't even mid-tones! Very dark areas will look a blue-gray.

Figure 3. Color gamut of a Dell UP3216Q computer monitor. With the typical

black level of LCD and LED displays, black levels are not true black. As

scene intensity drops, the color gamut decreases, contrast is lost and

colors shift when the black level is not neutral. Here there is a small

shift toward blue/cyan as scene levels drop. Black level can also be

influenced by reflections off the display from light in the room.

NOTE: this is not unique to the Dell monitor; it is a property

of the technology.

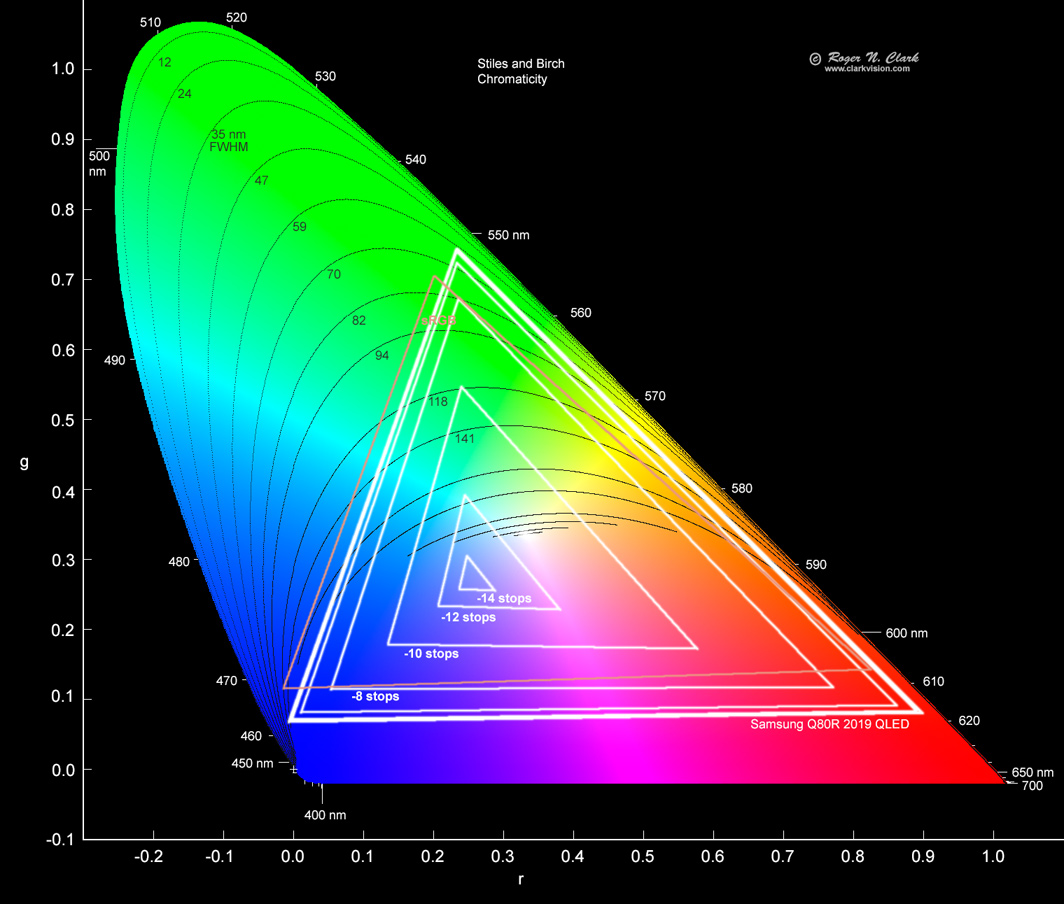

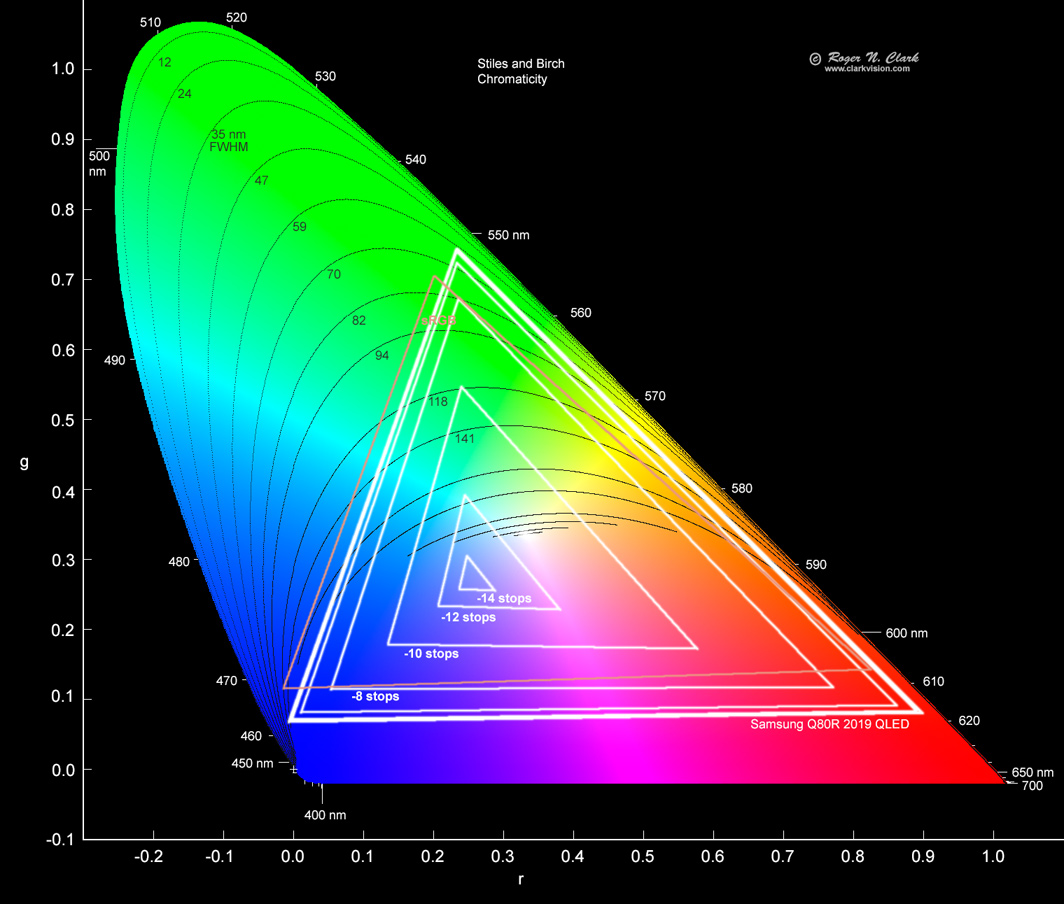

A QLED HDR TV color gamut is shown in Figure 4. Note that the red primary is near the DCI-P3

red vertex and this is specified as a DCI-P3 monitor. At about -7 stops the color gamut drops

significantly below sRGB, and the low end is about 3 stops better than the Dell monitor in

Figure 3.

Figure 4. Color gamut of a Samsung Q80R 2019 model QLED 4K HDR TV. The black level used for this

calculation is the "black on chart" spectrum shown in

Figure 9b at Color Part 2: Color Spaces and Color Perception.

For darker scenes containing NO bright objects, the gamut is larger, similar to the OLED in Figure 5.

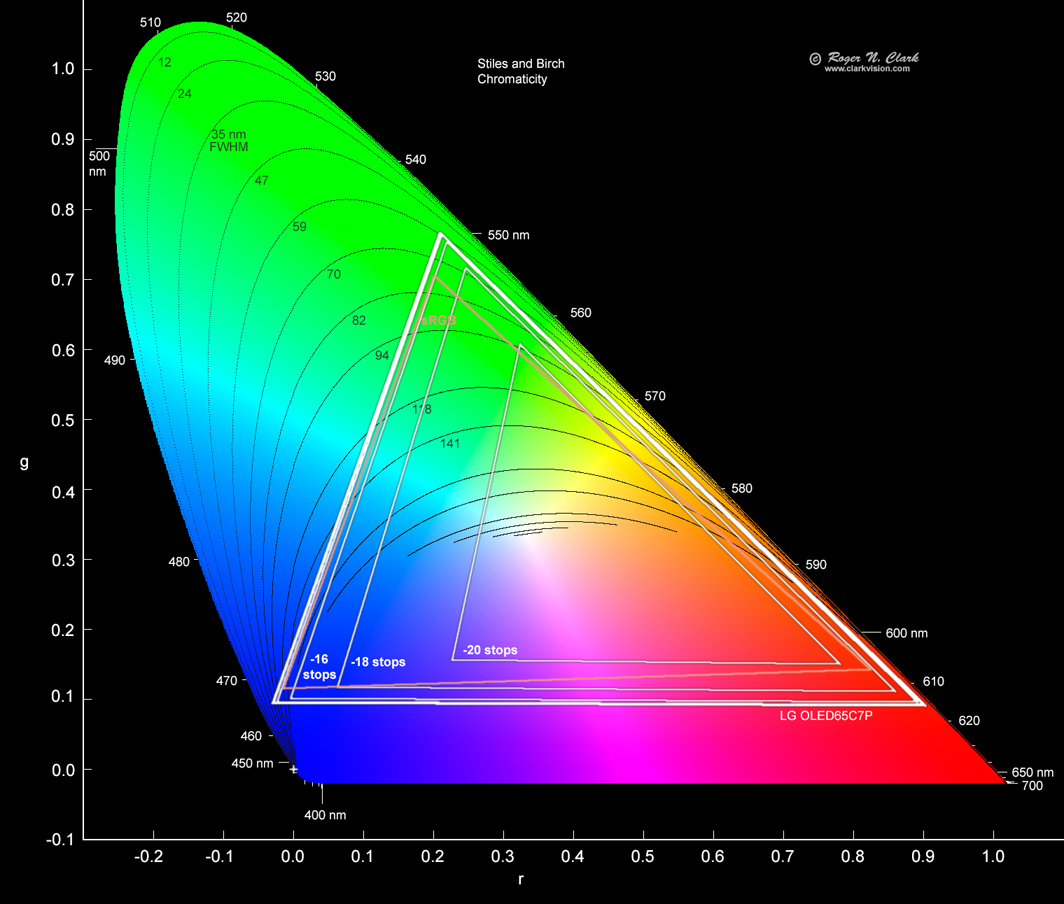

The LG OLED color gamut versus intensity is shown in Figure 5. It shows

excellent color, dropping significantly below sRGB at -16 to -18 stops.

If the monitor were in a room painted black and all people were wearing

black clothes it might be a little better. The measured low end was

limited by reflections of the test pattern off the walls of a dark

(no light) typical living room at night. Even at -18 stops, the color

is still strong, and that shows with great impact on perceived image content.

It has to be seen to understand what a profound effect this is.

Figure 5. Color gamut of an LG OLED65C7P 4K HDR TV. With the typical

black level of standard LCD and LED displays, black levels are not true

black, but it is on OLED displays. Because OLED displays have true black,

the residual black level may be influenced by light levels in the room

reflecting off the monitor. For this measurement, in a normal living room

at night, the only lights were from LEDs indicating power on some devices,

and from an internet switch in the corner of the room behind the TV. The

residual light level was more than 1 million times fainter than white

on the display (in standard dynamic range). That is amazingly good,

on the order of 1000 times better than a standard LCD/LED display. As

scene intensity drops on OLED, there is minimal impact on color gamut,

contrast, and shifting of colors. The shift at -18 stops on the OLED

display is similar to the observed shift at -4 to -5 stops on the standard

LED displays (e.g. Figure 3).

Sound: Dolby Atmos and DTS:X

Another game changer for video is 3-dimensional immersive sound.

Imagine walking through a forest and hearing a bird in a tree overhead,

and from the direction of the sound, be able to pinpoint where that

bird is located. New 3-D immersive sound enables that in your home.

There are two technologies in common use: Dolby Atmos and DTS:X.

The advent of surround started after simple 2-channel stereo. Because we

cannot perceive the direction of low frequencies, sub-woofers were added to

the 2 channel sound system, called 2.1, where the .1 meant the sub-woofer.

Next came 3.1, which added a center channel for voice. Next came 5.1:

center, left front, right front, left side/rear, right side/rear.

Then came 7.1: center, left front, right front, left side, right side,

left rear, right rear. Now another number is added, like 5.1.2, 5.1.4,

7.1.2, 7.1.4, etc. The added number is the number of overhead speakers.

The overhead speakers add the 3rd dimension.
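The layout labels read as main speakers, then sub-woofers, then overhead speakers. A minimal Python sketch of that reading follows; `SpeakerLayout` and `parse_layout` are names I made up for illustration, not part of any audio standard or library.

```python
from typing import NamedTuple


class SpeakerLayout(NamedTuple):
    main: int        # ear-level speakers (front, center, side, rear)
    subwoofers: int  # the ".1" low-frequency channel
    overhead: int    # overhead speakers; 0 when the third number is absent


def parse_layout(label: str) -> SpeakerLayout:
    """Parse labels such as '2.1', '5.1' or '7.1.4'."""
    parts = [int(p) for p in label.split(".")]
    if not 1 <= len(parts) <= 3:
        raise ValueError(f"unrecognized layout label: {label!r}")
    parts += [0] * (3 - len(parts))  # pad missing fields with zero
    return SpeakerLayout(*parts)


for label in ("2.1", "5.1", "7.1.2", "7.1.4"):
    layout = parse_layout(label)
    print(f"{label:6s} -> {layout.main} main, {layout.subwoofers} sub, "
          f"{layout.overhead} overhead, {sum(layout)} speakers total")
```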

But the new channels are not simply multi-channel recorded tracks.

While some audio tracks can be recorded for specific channels for backward

compatibility, additional sounds are not coded for a specific channel,

but to come from a specific 3-D direction! The receiver must then interpret

the 3-D location and send the sound to your speakers; which speakers it uses depends

on the direction the sound should come from and your speaker setup. I run a 7.1.2 system and the

3-D effect is impressive. I plan to move to a 7.1.4 setup in a couple

of years when receiver prices come down. Both Dolby Atmos and DTS:X

offer similar capability, and for a home system one probably can not

tell the difference. Dolby Atmos is proprietary and is licensed, while

DTS:X is an open standard.
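To make the object-based idea concrete, here is a toy Python sketch of a renderer that takes a sound's 3-D direction and decides how loudly each speaker should play it. This is NOT how Dolby Atmos or DTS:X receivers actually work (I do not know their internals). The speaker layout, the `sharpness` parameter, and all names are assumptions of mine, chosen only to show the principle that the same encoded position maps to different gains on different speaker setups.

```python
import math


def unit(v):
    """Scale a 3-vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0:
        raise ValueError("direction must be non-zero")
    return tuple(c / length for c in v)


# Hypothetical layout, as directions from the listener to each speaker:
# x = right, y = front, z = up. Two overhead speakers, no sub-woofer.
SPEAKERS = {
    "center":         unit((0, 1, 0)),
    "front_left":     unit((-1, 1, 0)),
    "front_right":    unit((1, 1, 0)),
    "surround_left":  unit((-1, -1, 0)),
    "surround_right": unit((1, -1, 0)),
    "top_left":       unit((-1, 0, 1)),
    "top_right":      unit((1, 0, 1)),
}


def render_gains(direction, speakers=SPEAKERS, sharpness=4.0):
    """Toy renderer: weight each speaker by how closely it lines up with the
    object's direction, then normalize so the summed power (gain squared) is 1."""
    d = unit(direction)
    raw = {}
    for name, s in speakers.items():
        alignment = max(0.0, sum(a * b for a, b in zip(d, s)))
        raw[name] = alignment ** sharpness
    norm = math.sqrt(sum(g * g for g in raw.values()))
    if norm == 0:
        raise ValueError("no speaker faces that direction")
    return {name: g / norm for name, g in raw.items()}


overhead = render_gains((0, 0, 1))  # e.g. a bird directly above the listener
for name, gain in overhead.items():
    print(f"{name:15s} {gain:.3f}")
```

With this layout, a sound directly overhead is split equally between the two top speakers and the ear-level speakers stay silent. Remove the top speakers from the dictionary and the same position can no longer be rendered from above, which is why the overhead speakers matter.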

Display Resolution

Table 2. The coming revolution is in how we view images.

| Name | Pixels | Megapixels | Bits / color | SDR/HDR | When common |
|---|---|---|---|---|---|
| Increased resolution (nice but not the revolution!): | | | | | |
| VGA | 640 x 480 | 0.3 | 8 | SDR | 1990s |
| XGA | 1024 x 768 | 0.79 | 8 | SDR | ~2000 |
| 1080p HD | 1920 x 1080 | 2.07 | 8 | SDR | mid-2000s |
| 4K UHD | 3840 x 2160 | 8.3 | 8 | SDR | ~2016+ |
| New game changer is HIGH DYNAMIC RANGE, HDR: | | | | | |
| 4K UHD HDR | 3840 x 2160 | 8.3 | 10 to 12 | HDR | 2018+ |
| 8K UHD HDR | 7680 x 4320 | 33 | 10 to 12 | HDR | 2020+ |

Data rates and streaming video vs blu-ray disks

Higher resolution and more bits per color mean more data per image and

a higher bit rate for video. The uncompressed data rate for 4K 10-bits/color

video at 30 frames per second is (3840 * 2160 * 30 fps * 3 colors * 10

bits per color=) 7.465 gigabits/second (933 megabytes/second). Few have

internet speeds of that capacity, and that is even challenging for hard

disk drive speeds on a local high end computer. So compression is used.

Best quality for video (highest bit rate) is 4K Blu-Ray disks which have

a bit rate of about 100 megabits/second, meaning compression ratio of

7465/100 ~ 75. Streaming services in the US, like Netflix, compress

more, to stream around 15 megabits/second, thus compression ratios of

around 500 for 4K 30 FPS. The higher the compression ratio, the more quality is lost, both in

dynamic range and fine detail.
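The arithmetic above is easy to check with a few lines of Python. The function names are mine, and the 100 and 15 megabit/second delivery rates are the approximate figures quoted in the text, not measured values.

```python
def uncompressed_mbps(width, height, fps, bits_per_color, colors=3):
    """Uncompressed video data rate in megabits per second."""
    return width * height * fps * colors * bits_per_color / 1e6


def compression_ratio(raw_mbps, delivered_mbps):
    """How many times the raw stream must shrink to fit the delivery rate."""
    return raw_mbps / delivered_mbps


raw = uncompressed_mbps(3840, 2160, fps=30, bits_per_color=10)
print(f"4K, 30 fps, 10 bits/color: {raw:,.0f} megabits/s "
      f"({raw / 8:,.0f} megabytes/s)")

for source, rate in (("4K Blu-ray", 100), ("Streaming", 15)):
    print(f"{source:10s} at {rate:3d} megabits/s -> "
          f"compression ratio ~{compression_ratio(raw, rate):.0f}")
```

Changing `fps` or `bits_per_color` reproduces the other uncompressed rates for higher frame rates and bit depths.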

Table 3. Video data rates, uncompressed.

| Format | Pixels | Frames / sec | # Colors | Bits / color | Data rate (megabits / sec) |
|---|---|---|---|---|---|
| 2K | 1920 x 1080 | 30 | 3 | 8 | 1,490 |
| 4K | 3840 x 2160 | 30 | 3 | 8 | 6,000 |
| 4K | 3840 x 2160 | 30 | 3 | 10 | 7,500 |
| 4K | 3840 x 2160 | 60 | 3 | 10 | 14,900 |
| 4K | 3840 x 2160 | 60 | 3 | 12 | 17,900 |
| 4K | 3840 x 2160 | 120 | 3 | 10 | 29,900 |
| 4K | 3840 x 2160 | 120 | 3 | 12 | 35,800 |
| 8K | 7680 x 4320 | 30 | 3 | 10 | 29,900 |
| 8K | 7680 x 4320 | 60 | 3 | 10 | 59,700 |
| 8K | 7680 x 4320 | 120 | 3 | 10 | 119,400 |
| 8K | 7680 x 4320 | 120 | 3 | 12 | 143,300 |

Media capabilities:

| Medium | Data rate | Compression for 4K 60 fps |
|---|---|---|
| DisplayPort 2.0 (2019) | 77.37 gigabits/sec | 1 |
| HDMI 2.1 (released 2017) | 42.6 gigabits/sec | 1 |
| DisplayPort 1.4a (2018) | 25.92 gigabits/sec | 1 |
| HDMI 2.0 (released 2013) | 14.4 gigabits/sec | 1 |
| USB 3 hard drives: ~100+ megabytes/second (130+ on faster computers) | ~600+ to 1100+ megabits/sec | ~18 |
| 4K UHD HDR Blu-ray disks (released 2016) | 92, 123 or 144 megabits/sec | ~103+ |
| Netflix, Disney+ streaming | ~15 to 20 megabits/sec | ~1000 |

For reference, JPEG still image compression is ~6 (high quality) to 16 (low quality).

In my experience, Netflix 4K HDR streaming is a wonderful experience.

Disney+ seems to be better. But for real jaw-dropping knock your socks

off experience, 4K blu-rays are the way to go. Even most movie theaters

these days do not match the dynamic range of 4K HDR blu-ray on an OLED TV

(if one could go to a movie theater during this pandemic).

While streaming 4K movies online can be a wonderful experience,

compression limits image quality, losing fine detail and dynamic range.

Streaming at 15 to 20 megabits per second is a distant second to the best

experience with highest data quality available from 4K blu-ray disks.

Blu-Ray disk players are under $200. Blu-ray disks vary a lot in price

from under $15 for disks that have been out for a while to typically

$24 to $35 for new titles.

If you decide to go 4K blu-ray, the first disks to get are BBC's Seven

Worlds, One Planet and Planet Earth II. Great movies for 4K HDR viewing

are listed in the next article in this series. Movies are being scanned

from film and remastered in 4K HDR. Movies shot on 65 mm film and

higher show amazing clarity, detail and dynamic range. Included in

this are Lawrence of Arabia, 2001: A Space Odyssey, the original Alien,

Interstellar, and others. Newer films shot on modern 35mm film also

transfer well, for example, Apollo 13. Older 35 mm film was grainier

and 4K shows the grain. Lawrence of Arabia was scanned and remastered

at 8K and the 4k blu ray transfer is stunning. And there are many more

to choose from. Modern movies are being shot on 6K and higher digital

movie cameras. Seeing these movies again, but in 4K HDR on OLED is a

transformative experience. Part 2 of this series will list both hardware

and media for examples to demonstrate this amazing new technology.

Photographers should start thinking how they will change their presentations

to take advantage of this new technology.

Chroma Sub-Sampling

Chroma sub-sampling is a method of compression. The idea is to reduce

color detail in blocks of 2x2 pixels to lower the data volume and

transmission time. This idea is similar to the way the human eye

works: less color resolution than intensity (luminance) resolution.

The main chroma subsampling methods are:

- 4:4:4 Full resolution of both luminance and color. This is what one wants for a computer monitor connection.

- 4:2:2 Color is 1/2 horizontal resolution, full vertical resolution, giving 1.5x lossy compression. (Rec. 601)

- 4:2:0 Color is 1/2 horizontal resolution, 1/2 vertical resolution, giving 2x lossy compression. (jpeg, jfif, H.261, MPEG-1, MPEG-2, HD Blu Ray, 4K UHD Blu Ray)
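The compression factors in the list above follow directly from counting samples. In the J:a:b notation, J is the width in pixels of a reference block 2 rows tall, a is the number of chroma samples in the first row, and b is the number of additional chroma samples in the second row. The Python sketch below works through that count; the function names are mine, and it assumes one luminance channel plus two chroma channels.

```python
def samples_per_pixel(j: int, a: int, b: int) -> float:
    """Average number of stored samples per pixel for J:a:b subsampling."""
    luma = 2 * j          # one luminance sample per pixel, J wide by 2 rows
    chroma = 2 * (a + b)  # (a + b) samples in each of the two chroma channels
    return (luma + chroma) / (2 * j)


def compression_factor(j: int, a: int, b: int) -> float:
    """Data reduction relative to full 4:4:4 sampling (3 samples per pixel)."""
    return 3.0 / samples_per_pixel(j, a, b)


for scheme in ((4, 4, 4), (4, 2, 2), (4, 2, 0)):
    label = ":".join(str(n) for n in scheme)
    print(f"{label}  {samples_per_pixel(*scheme):.1f} samples/pixel, "
          f"{compression_factor(*scheme):.1f}x compression")
```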

For more details, see:

https://en.wikipedia.org/wiki/Chroma_subsampling

http://poynton.ca/PDFs/Chroma_subsampling_notation.pdf

https://www.rtings.com/tv/learn/chroma-subsampling

More Technical details

New technology, in the form of High Dynamic Range (HDR) TVs and monitors,

is now out at the consumer level with 20 and more stops dynamic range!

This revolution is being driven by the video film industry, and they

have standardized new color spaces and encoding methods for HDR displays.

Specifically, the standards are Rec 2020 and Rec 2100.

https://en.wikipedia.org/wiki/Rec._2020

https://en.wikipedia.org/wiki/Rec._2100
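Rec. 2100 specifies two ways of coding intensity: PQ (Perceptual Quantizer) and HLG. As a sketch of what a specific coding for intensity looks like, here is the PQ curve, which HDR10 and Dolby Vision use, written in Python. The constants are the published PQ values; the function names and the sample luminances are mine. Luminance is in candelas per square meter (nits), a unit not used elsewhere in this article. The 10-bit code shown is a full-range simplification, whereas real video typically uses a narrower code range.

```python
# Published PQ constants.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PEAK_NITS = 10000.0  # luminance that maps to a signal value of 1.0


def pq_encode(nits: float) -> float:
    """Absolute luminance (cd/m^2) -> PQ signal in the range 0..1."""
    y = max(nits, 0.0) / PEAK_NITS
    yp = y ** M1
    return ((C1 + C2 * yp) / (1.0 + C3 * yp)) ** M2


def pq_decode(signal: float) -> float:
    """PQ signal in the range 0..1 -> absolute luminance (cd/m^2)."""
    ep = signal ** (1.0 / M2)
    return PEAK_NITS * (max(ep - C1, 0.0) / (C2 - C3 * ep)) ** (1.0 / M1)


for nits in (0.1, 1, 100, 1000, 10000):
    signal = pq_encode(nits)
    print(f"{nits:8.1f} nits -> signal {signal:.4f}, "
          f"full-range 10-bit code {round(signal * 1023)}")
```

Note how the curve is far from linear: about half of the signal range is spent on luminances below roughly 100 nits, where the eye is most sensitive to small steps, and the rest covers highlights up to 10,000 nits. Unlike 8-bit SDR coding, each code value stands for an absolute brightness, which is what lets the same file look better on a more capable future display.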

Prints have about a 5 stop dynamic range (a stop is a factor of 2) (Table 1).

CRT monitors raised that to 6 or 7 stops, then LCD and LED monitors raised

it to about 10 stops. All these are called Standard Dynamic Range, SDR.

The human eye can see about 14 stops dynamic range in a single scene,

but can quickly adapt to more than 20 stops as scene brightness changes.

With dark adaptation, we can see things around 25 stops fainter than average

daylight, and during the daytime, we perceive detail in bright things a

hundred or more times brighter. The total dynamic range of human vision

is on the order of 30 to 35 stops. What we see in images we produce

and display with today's technology, like an LED computer monitor,

looks flat compared to the real world.
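
Because a stop is a factor of 2, the stop counts above convert directly to brightness ratios. The Python sketch below does that conversion for the figures quoted in this section. The function names and the labels in the table are illustrative only.

```python
import math

def stops_to_ratio(stops):
    """Brightness ratio spanned by a given number of stops (each stop is 2x)."""
    return 2.0 ** stops

def ratio_to_stops(ratio):
    """Number of stops spanned by a given brightness ratio."""
    return math.log2(ratio)

# Approximate stop counts quoted in the text above.
examples = {
    "print": 5,
    "LCD/LED monitor (SDR)": 10,
    "human eye, single scene": 14,
    "human eye, total range": 30,
}

for label, stops in examples.items():
    print(f"{label}: {stops} stops = {stops_to_ratio(stops):,.0f}:1")

# "A hundred or more times brighter" expressed in stops.
print(f"100x = {ratio_to_stops(100):.1f} stops")
```

A factor of 100 is about 6.6 stops, so 25 stops of dark adaptation plus the bright end of daylight is consistent with a total of 30 to 35 stops.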

Photographers commonly use Adobe RGB and sRGB color

spaces with a few using ProPhoto color space. I predict Adobe RGB and

ProPhoto will likely fall from use, as the film industry has the new

Rec. 2020 color space and Rec. 2100 dynamic range standards. New TVs

and computer monitors are aiming toward these standards for the film

and gaming industries. Rec. 2100 is a difficult standard to meet with

today's technology, with its brightness range of 30 stops (a factor of about one billion).

DCI-P3 seems to be the emerging interim standard; its gamut is close to, but

different from, Adobe RGB.

For an introduction to color standards and the approximations that started in the

1930s because people did not want to integrate functions with negative numbers,

see:

Color Part 1: CIE Chromaticity and Perception

Color Part 2: Color Spaces and Color Perception

The above Color Spaces and Color Perception article shows the changing

color gamuts of several computer monitors and TVs (see Figure

12 in that article). Also shown are spectral responses. These are not discussed by

most review sites.

Discussion and Conclusions

The motion picture industry has been moving to new color standards and higher

dynamic range for output devices. The standards are impressive and will

work well into the future. Devices aiming toward these standards, from

cameras to TVs and computer monitors, are already showing results

closer to real world views, and in higher resolution and dynamic range

than ever before possible. Home computers are also catching up in both

hardware and software capability. And the results are an impressive

leap forward. New technology is being developed for better displays so

that existing content will show even better on future hardware.

The still photo industry needs to catch up. Raw converter software

needs to include the Rec. 2100 standards, and photo editing software needs

to recognize the new standards as well. One can add the Rec. 2020 and

DCI-P3 color spaces to photo editors if not already there. But

photo editing software also needs to write HDR still image formats, and

TVs need to display images in those formats.

At present, the only way for a still photographer to display still images in HDR on an

HDR capable display (e.g. OLED) is to make a video clip and display the video.

Of course this takes up much more storage space, but that can be minimized by

specifying a low frame rate, e.g., 5 or 6 FPS. Note that some TVs have a lower limit

on the frame rate they will play. Software exists to do this, for example, ffmpeg (free open source,

discussed in the next article).
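
As a rough illustration of the still-image-to-video approach, the Python sketch below builds one possible ffmpeg command line. It is not necessarily the workflow covered in the next article. It assumes an ffmpeg build that includes the libx265 encoder, and it assumes the input image already holds Rec. 2020 color with PQ-encoded (SMPTE ST 2084) values; the color options shown only tag the output and do not convert anything. The file names and the helper function are hypothetical, and a given TV may require additional HDR metadata before it switches into HDR mode.

```python
import shlex

def still_to_video_cmd(image_path, out_path, seconds=10, fps=5):
    """Build an ffmpeg argument list that loops one still image into a short clip.

    Assumption: the image is already Rec. 2020 / PQ encoded. The color_* options
    below only label the stream; they do not change pixel values.
    """
    return [
        "ffmpeg",
        "-loop", "1",                  # repeat the single input image
        "-framerate", str(fps),        # low frame rate keeps the file small
        "-i", image_path,
        "-t", str(seconds),            # clip duration in seconds
        "-c:v", "libx265",             # HEVC encoder (must be present in the build)
        "-pix_fmt", "yuv420p10le",     # 10-bit, 4:2:0 chroma sub-sampling
        "-color_primaries", "bt2020",  # tag: Rec. 2020 primaries
        "-color_trc", "smpte2084",     # tag: PQ transfer function
        "-colorspace", "bt2020nc",     # tag: Rec. 2020 non-constant luminance matrix
        out_path,
    ]

# Hypothetical file names, for illustration only.
cmd = still_to_video_cmd("my_image_pq.png", "my_image_hdr.mp4", seconds=10, fps=5)
print(shlex.join(cmd))
```

The printed command can be pasted into a terminal. Raising the duration or frame rate increases the file size, so a low frame rate such as 5 FPS is the main lever for keeping storage down, subject to the minimum frame rate the TV will accept.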

Web browsers need to include the capability to display HDR content, including still

image formats and video.

In my opinion and experience, this new technology is such a profound leap in

visual experience that I want to produce all my still images and videos in full HDR

output. The motion picture industry is moving so fast in this regard, and display manufacturers

are racing so hard to bring out new technologies for computer displays and TVs, that soon

everyone will have real HDR display capability. Just as 4K TVs are common

today and it is hard to find TVs that are not 4K capable, it will not be long before

HDR is common too. And I do not mean just accepting an HDR signal (which is

common already in most TVs), but actually being able to show high dynamic range

output.

In the next article, I will review hardware and 4K HDR movies that illustrate

this new technology.

References and Further Reading

High-dynamic-range video (Wikipedia)

Hybrid Log-Gamma (Wikipedia)

Humphrey, J., 2018, High Dynamic Range. The Best TV Picture You've Ever Seen.

Broadcast and Professional Products Hitachi Kokusai Electric America.

Software for HDR images.

Rec. 2100 (Wikipedia)

DTS:X vs. Dolby Atmos, The latest surround sound formats, crutchfield.com.

Notes:

The Color Series

http://clarkvision.com/articles/a-revolution-is-coming-to-photography/

First Published December 19, 2020

Last updated February 26, 2022