ClarkVision.com

| Home | Galleries | Articles | Reviews | Best Gear | Science | New | About | Contact |

Technology advancements for low light long exposure imaging

by Roger N. Clark


Digital camera technology continues to evolve enabling us to image fainter subjects with ease. Many of these improvements are not described by manufacturers in their literature, nor evaluated in many reviews. This article discusses the changes and how they might impact low light long exposure imaging and post processing. These changes mean that some aspects of post processing are no longer necessary.

The Night Photography Series:

Contents

Introduction

Calibrating a Sensor

Light frames

Dark frames

The flat field

The bias frame

Noise

Random noise

Pattern noise

The Calibration Equation

Limitations of Calibration

Technology Advancements

Sensitivity

Lower Dark Current

Lower Pattern Noise and Better Uniformity

Low end uniformity

On Sensor Dark Current Suppression Technology

Implications for Calibration

Dark Current

The Flat Field

Dark Current Caveat

Post Processing Advancements

Conclusions

References and Further Reading

The invention of the silicon photodiode over half a century ago as a light sensitive device and its development into arrays of photodiodes in image sensors, some 10 to 50 times more sensitive than film, has enabled lower light photography to flourish. The silicon photodiode is the light sensitive material in CCD and CMOS sensors in digital cameras. CCD versus CMOS is just a storage and readout mechanism to get the signals from the photodiode.

In general, silicon photodiode material has a peak quantum efficiency, QE, over 90%. Quantum efficiency is the fraction of light incident into the sensor that generates a usable signal. CCDs typically have QE in the 50-60% range for front side illuminated and often above 90% for back side illuminated devices. The loss in QE over the base silicon photodiode is due to wires and gaps between pixels that are not photosensitive. CMOS additionally has electronics in the pixel that take up space that is not photosensitive, lowering QE. By the use of microlenses and internal pixel design, light can be focused onto the light-sensitive parts of the pixels, boosting QE above 60% post circa 2013, comparable to CCDs that are front side illuminated. Back side illuminated CMOS sensors are also produced, but the manufacturing is more complex, so larger sensor sizes are typically only front side illuminated as of this writing.

But quantum efficiency is only one part of the story, especially for low light photography. When signals are low, the response of the sensor near zero signal is critically important. In a given exposure, with no light on the sensor, reading the sensor still results in noise and that noise, called the read noise, limits the faintest detection. The first digital camera, in 1971, had a QE above 80% and used a 256 x 256 silicon photodiode array with a readout by an electron beam in a vacuum tube. The readout noise with no signal on the sensor was 1000 electrons. Later CCDs were developed and were revolutionary in low light detection with read noise in the 10 to 20 electron range. Still later CCDs reduced the read noise to the 8 or so electron range. In the 1990s, the CMOS sensor was developed by Eric Fossum and his team at JPL. That put a transistor in each pixel to amplify the tiny signals from the photodiode, which helped reduce read noise. In the early consumer digital cameras, CMOS read noise was comparable to CCDs, in the 8 or so electron range. But the transistor blocked more light, reducing QE. Continued developments in CMOS sensor technology have driven read noise to below 3 electrons in most digital cameras by around 2013, and even around 1 electron in the better ones. And in research labs in 2016, CMOS sensors have read noise of less than 0.3 electron! That means on average from pixel to pixel--obviously, one does not detect a fraction of an electron.

But again, low read noise is only part of the story, and it is the next developments that have important implications for low light long exposure detection. What I will describe below are some of the little known factors in low light long exposure detection with modern sensors.

Some general background as to why I am writing this. I am a professional astronomer, having done digital imaging for decades, long before consumer digital cameras. I evaluate, calibrate, and use digital sensors for my work. Today I work mainly with NASA spacecraft instruments (studying objects throughout the Solar System), and NASA aircraft instruments (looking at the Earth). You can learn more about me and see my publications here. I mention this because I also do astrophotography and nightscape photography for fun. But as I keep abreast with the details of new technology and see how I can change my methods to do more with simpler methods, I constantly change and adapt those methods to make images. Oddly, many in the amateur astrophotography community who quickly saw the advantages of CCDs over film and adapted, have now become entrenched in the CCD workflow and criticize new methods. I often see some of these astrophotographers telling people starting out that they must do things a certain way if they want to detect faint things. Well, NO. Technology is advancing and processing methods from a decade ago may no longer apply, and in some cases, old methods are now actually detrimental to low signal detection. So in this article, I will detail the changing technology and when it might benefit you to use new methods.

The image in Figure 1 illustrates what is now possible. The image is of two bright galaxies (if you can call galaxies bright), which are surrounded by extremely faint nebulosity called the Integrated Flux Nebula, or IFN. The IFN is composed of dust particles, hydrogen, carbon monoxide and other gases, and is located at high galactic latitudes above the galactic plane. The light is reflected starlight from many stars in the Milky Way, thus the term integrated. The nebula is very faint and is a challenge to image. There is extended red emission from the gases in the nebula, plus blue reflected light from the dust, which can give varied blue, red and purple colors. The brightest portions of the IFN in this image are fainter than 25 magnitudes / sq. arc-second and fainter patches are at least 3 times fainter. At these levels, only 0.9 photon per pixel per 1-minute exposure were recorded on average from the "bright" IFN patch above M81 with fainter patches less than 1/3 that level.
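As a rough check on the numbers above, a brightness ratio converts to a magnitude difference with the standard relation of 2.5 times the base-10 logarithm of the ratio. The sketch below applies that to the patches that are 3 times fainter than 25 magnitudes / sq. arc-second, and totals the photons per pixel over the 47-minute session. The function name is illustrative only, and the photon total simply multiplies the per-minute rate by the session length.

```python
import math

def magnitude_difference(flux_ratio: float) -> float:
    """Magnitude difference corresponding to a brightness (flux) ratio."""
    return 2.5 * math.log10(flux_ratio)

# Surface brightness of the "bright" IFN patch quoted in the text
bright_ifn = 25.0  # magnitudes / sq. arc-second

# Patches 3 times fainter are larger in magnitude by 2.5*log10(3)
faint_ifn = bright_ifn + magnitude_difference(3.0)

# Photons per pixel collected from the bright patch over the whole session
photons_per_minute = 0.9
session_minutes = 47
total_photons = photons_per_minute * session_minutes

print(f"faint patches: about {faint_ifn:.2f} mag / sq. arc-second")
print(f"bright patch: about {total_photons:.0f} photons per pixel in total")
```

So "3 times fainter" is a little over one magnitude, and even the brightest IFN patch delivers only a few tens of photons per pixel over the entire session.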

Recording such faint levels in the past required hours of exposure time and rigorous calibration using dark frames, flat fields and bias frames. It would have been difficult to do unless the sensor was cooled and maintained at a stable temperature. Yet, this image was obtained with a stock consumer digital camera, running at ambient temperature with the sensor changing temperature by more than 10 degrees during the session (dark current doubles every 5 to 6 degrees C), and no dark frames, bias frames, or flat field frames were measured or applied. And this image required only 47 minutes to obtain! So what changes in technology have enabled such an image to be obtained without the traditional baggage and calibration requirements? And what data processing enabled such low signals to be brought out?

First I will discuss the traditional workflow to calibrate an image from a digital sensor. This workflow was necessary when sensors first came out and it is still important today.

Light frames, L, are the measured subject, whether it is a portrait of a friend, or a faint galaxy, recording the light from the subject is fundamental. But the sensor is not perfect, so the image it records can have electrical offsets, the transmission of the lens can vary across the field of view, there can be pixel to pixel sensitivity differences (called Pixel Response Non-Uniformity, PRNU), and signals generated by thermal processes in the sensor (called dark current). Dark current varies with temperature and from pixel to pixel, causing a growing offset with time that can be different for each pixel. Frankly, it is a mess, and the data out of early sensors looked pretty ugly before calibrating out all these problems.

Dark frames are exposures with no light on the sensor (shutter closed and/or lens cap on) to measure the growing signal from thermal processes. Dark current doubles for every 5 to 6 degrees Centigrade increase in sensor temperature. The amount of dark current generally varies from pixel to pixel and across the sensor. The amount of dark current in a sensor depends on the pixel design, but in general, smaller pixels have lower dark current. Besides the annoying false signal levels of dark current, there is noise equal to the square root of the amount of dark current. Dark current noise, light pollution and airglow are the limiting factors in detecting faint astronomical objects. Dark current needs to be subtracted from the light frames. Usually, astrophotographers make many dark frame measurements and average them together to improve the signal-to-noise ratio. The result is called a master dark. Astrophotographers often make libraries of master darks at different temperatures to document the changing dark current with temperature. The master dark is then subtracted from each light frame.
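The two noise statements in the paragraph above can be put into numbers with a minimal sketch. It assumes the random noise in each dark frame is independent from frame to frame, so averaging N frames lowers the noise by the square root of N, and it ignores read noise for simplicity. The 100 electron dark signal and the 25 frames are hypothetical values chosen only to make the arithmetic easy to follow.

```python
import math

def dark_noise(dark_electrons: float) -> float:
    """Random noise (electrons) from accumulated dark current: sqrt of the signal."""
    return math.sqrt(dark_electrons)

def master_dark_noise(single_frame_noise: float, n_frames: int) -> float:
    """Noise remaining in an average of n_frames darks (independent noise assumed)."""
    return single_frame_noise / math.sqrt(n_frames)

# Hypothetical pixel that accumulates 100 electrons of dark signal per exposure
single = dark_noise(100.0)

# Averaging 25 such dark frames into a master dark
master = master_dark_noise(single, 25)

print(f"single dark frame noise: {single:.1f} electrons")
print(f"master dark noise (25 frames): {master:.1f} electrons")
```

Note that subtracting the master dark removes the dark signal level but not the random dark noise already present in each light frame.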

The flat field is made by measuring the light from a perfectly uniform surface. The flat field measures the relative light response through the optical system and the pixel to pixel response of the sensor (the PRNU). Generally, the amount of light through a lens or telescope decreases away from the center of the field of view and the flat field describes that fall-off. There could be dust on the sensor which reduces light throughput over a small region of the sensor. There could be pixel to pixel variations in sensitivity due to tiny differences in manufacturing the sensor. The flat field measures all these detrimental effects. The center of the flat field is normalized to 1.0 and the flat field is ratioed into the light frames to correct relative response differences.

The bias frame measures the electrical offsets in the system so you can determine the true black level. Cameras must include an offset to keep low level data from being clipped, though not all cameras do include an offset. In theory, the bias is a single value for all pixels across the entire sensor. In practice, there may be a pattern in the bias signal from pixel to pixel. Like the dark frame, the bias is measured with no light on the sensor, but at a very short exposure time, like 1/4000 second. The bias frame is subtracted from the light frames and flat field frames to establish the true sensor zero light level. Note that bias is included with every measurement, whether light frame, flat field or dark frame.

Noise. Noise comes in two general forms: random and pattern.

Random noise comes from several sources. The first is noise in the light signal itself, equal to the square root of the amount of light collected. The ideal system is called photon noise limited, and most of the noise we see in our digital camera images is just that: noise from the light signal itself. To improve the noise, the only solution is to collect more light, either by a larger aperture, or longer exposure time.
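Because photon noise is the square root of the light collected, the signal-to-noise ratio of a photon noise limited measurement also grows only as the square root of the signal. A minimal sketch, with photon counts chosen purely for illustration:

```python
import math

def photon_snr(photons: float) -> float:
    """Signal-to-noise ratio when photon noise is the only noise: N / sqrt(N)."""
    return photons / math.sqrt(photons)

# Each step collects 4x more light (larger aperture or longer exposure)
snr_by_count = {n: photon_snr(n) for n in (100, 400, 1600)}
for n, snr in snr_by_count.items():
    print(f"{n:5d} photons -> S/N = {snr:.0f}")
```

Collecting 4 times more light only doubles the signal-to-noise ratio, which is why faint subjects demand so much aperture or exposure time.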

Sensors also show random noise from reading the signal from the sensor, called read noise. After the sensor, the camera electronics can also contribute random noise, and the combined sensor read noise plus downstream electronics noise is called apparent read noise.

Pattern noise, sometimes called banding. Pattern noise can appear as line to line changes in level, and lower frequency patterns that can include things that look like clouds, ramps and other slowly varying structures across the image. Figure 2 shows an example of extreme pattern noise from a high end camera circa 2004.

Pattern and post sensor camera electronics noise is a function of camera ISO, or gain. ISO does not change sensitivity, it is a post sensor gain. Boosting gain (ISO) boosts tiny signals from the sensor above downstream electronics noise, so in general, one observes decreasing pattern noise and decreasing apparent read noise as ISO is increased. Thus, increasing ISO for the same exposure time and aperture actually improves (lowers) the apparent noise on the subject. This is an important realization in low light photography.
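One way to picture why raising ISO lowers the apparent read noise is a simple two-stage noise model. This is an assumption made for illustration, not a description of any specific camera: sensor read noise and downstream electronics noise are taken to be independent, so they add in quadrature, and the gain is applied before the downstream electronics, so their contribution shrinks by the gain when referred back to the sensor. The 2 electron and 16 electron values are hypothetical.

```python
import math

def apparent_read_noise(sensor_noise_e: float,
                        downstream_noise_e: float,
                        gain: float) -> float:
    """Apparent read noise in electrons, referred to the sensor.

    Assumed model: independent noise sources add in quadrature, and
    downstream noise is divided by the post-sensor gain.
    """
    return math.hypot(sensor_noise_e, downstream_noise_e / gain)

# Hypothetical camera: 2 e- sensor read noise, 16 e- downstream noise at gain 1
noise_by_gain = {g: apparent_read_noise(2.0, 16.0, g) for g in (1, 2, 4, 8, 16)}
for g, noise in noise_by_gain.items():
    print(f"relative gain {g:2d}: apparent read noise {noise:.2f} electrons")
```

In this model the apparent read noise falls quickly at first and then levels off near the sensor read noise, so past some ISO further gain brings little benefit.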

Pattern noise can also be reduced by calibration using flat fields, bias and dark frames. The general equation for calibration is:

Calibrated image, C = (L - D) / (F) (equation 1)

The flat field, F, must be bias corrected, thus the measured flat field should have the bias subtracted: F = (measured flat frame image - B). Both the light frames, L, and the dark frames, D, include bias. Equation 1 requires the exposure times on L and D to be equal. If they are not, then the dark frame needs to be scaled, and the bias must be subtracted before scaling. I won't get into the details here, but scaling darks is only a partial solution.
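Equation 1 and the bias handling described above can be sketched with NumPy arrays. This is only an illustration under stated assumptions: the function name and arguments are hypothetical, the frames are tiny synthetic arrays rather than real camera data, and the flat is normalized using its single center pixel, which is noisier than what one would do in practice.

```python
import numpy as np

def calibrate(light, dark, flat_measured, bias, t_light=1.0, t_dark=1.0):
    """Equation 1: C = (L - D) / F, with F = measured flat - bias.

    L and D both contain bias, so it cancels when exposure times match.
    If they differ, the dark is bias-subtracted, scaled by the exposure
    ratio, and the bias is added back before subtraction.
    """
    flat = flat_measured - bias
    center = flat[flat.shape[0] // 2, flat.shape[1] // 2]
    flat = flat / center  # center of the flat field normalized to 1.0
    if t_light == t_dark:
        dark_term = dark
    else:
        dark_term = (dark - bias) * (t_light / t_dark) + bias
    return (light - dark_term) / flat

# Synthetic 3x3 example: uniform subject of 50 units seen through light fall-off
response = np.array([[0.8, 0.9, 0.8],
                     [0.9, 1.0, 0.9],
                     [0.8, 0.9, 0.8]])
bias = np.full((3, 3), 100.0)
dark_rate = 2.0                                  # dark signal per second
light = 50.0 * response + dark_rate * 10.0 + bias  # 10 second light frame
dark_same = dark_rate * 10.0 + bias                # 10 second dark frame
dark_short = dark_rate * 5.0 + bias                # 5 second dark frame
flat_measured = 1000.0 * response + bias

c_same = calibrate(light, dark_same, flat_measured, bias)
c_scaled = calibrate(light, dark_short, flat_measured, bias, t_light=10.0, t_dark=5.0)
print(c_same)
```

Both calls recover the uniform value of 50 in this idealized case. With real data, scaling a dark assumes the dark current rate was the same in both exposures, which is one reason scaled darks are only a partial solution.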

In low light long exposure photography, dark current is a significant limitation, so its accurate removal is important. The problem is, what is the correct dark frame? As stated above, dark current doubles every 5 to 6 degrees C. Digital cameras operating in the ambient environment vary in temperature. The temperature of a Canon 6D camera as a function of time in an astrophotography session is shown in Figure 3. When the camera is cold and imaging starts, the camera electronics at first warm the camera, changing the dark current. In long exposures, non-essential camera electronics are turned off, so there is minimal heating during the exposure. Heating mainly occurs when reading out the sensor, digitizing the signals and recording the data to the memory card. The LCD image review adds more heat. If live view is used, the sensor really heats up (avoid the use of live view as much as possible). Once heated, the sensor can take tens of minutes to cool back down. Small light cameras tend to cool faster, and large bulky ones (like the pro cameras) generate more heat from their multiple processors running fast, and the camera bulk retains heat longer.

So with dark current changing by factors of two every 5 to 6 C, and with uncooled cameras operating in ambient conditions with the temperature varying 7 to 8 degrees even when ambient temperatures are stable, what dark frame temperature should one use? NO SINGLE DARK FRAME accurately describes the dark current during such an imaging session like that shown in Figure 3, which is typical. Thus, making a master dark frame is only an approximation of the dark current from the sensor.
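To put numbers on the temperature sensitivity, the doubling rule can be written as a factor of 2 raised to the temperature change divided by the doubling interval. The sketch below uses a hypothetical function name and evaluates the 7 to 8 degree swings mentioned above for both ends of the 5 to 6 degree doubling range.

```python
def dark_current_ratio(delta_t_c: float, doubling_c: float) -> float:
    """Factor by which dark current changes for a temperature change of delta_t_c."""
    return 2.0 ** (delta_t_c / doubling_c)

low = dark_current_ratio(7.0, 6.0)    # smallest change: 7 C swing, doubling every 6 C
high = dark_current_ratio(8.0, 5.0)   # largest change: 8 C swing, doubling every 5 C
ten_degrees = dark_current_ratio(10.0, 5.0)

print(f"7 to 8 C swing: dark current changes by {low:.2f}x to {high:.2f}x")
print(f"10 C swing, doubling every 5 C: {ten_degrees:.0f}x")
```

A master dark made at one temperature can therefore be off by a factor of 2 to 3 for some of the light frames in a typical session.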

Further, there can be gradients in the sensor temperature, especially if there is a small breeze from one direction, preferentially cooling one side of the camera. So between changes in temperature, and potentially different gradients (even a 1 degree change across the sensor can be significant), dark frame subtraction is only an approximation on digital cameras running in ambient environments. The correction was perhaps only an 80 to 90 percent correction. In early digital cameras, with high dark current, one was left with remaining pattern noise that ultimately limited how faint one could get.

The situation was much better with cooled CCD cameras that amateur and professional astrophotographers operated. With a stable temperature, master dark frames are much more effective, and it is that effectiveness that has driven the philosophy of how to process digital camera images today (2017).

Flat Field limitations. Flat fields are actually quite difficult to measure, but are of critical importance for extracting faint signals, especially in astrophotography. The light fall-off from a lens can be pleasing in a portrait of a person, but in astrophotography the light pollution and airglow must be subtracted. If there is light fall-off or pixel-to-pixel variation in sensitivity, then the subtraction of the light pollution and airglow would need to vary similarly, and that would lead to errors in the result, again limiting detection of the faintest objects. Similarly, if the measured flat field is not correct, then the subtractions of light pollution and airglow do not go well, again resulting in limits to faint light detection.

After application of a poor flat field, remaining gradients are difficult to correctly remove. Is the remaining gradient due to light pollution so should be subtracted, or is the gradient due to an error in the flat field and thus needs to be removed by a multiplicative process? The wrong application can result in variable white balance with scene intensity.

Wide angle lens flat fields are especially difficult to measure. First, the lenses must be operated with the same settings as you image the subject with, e.g. at infinity for the night sky. Producing a large target (which does not need to be far away), that is perfectly uniformly lit to a fraction of a percent is difficult. Second it must be a perfect Lambert reflector or the brightness of the lit panel will vary with look angle even if uniformly lit.

The bottom line is that dark frame approximations with uncooled (unstabilized temperature) digital cameras and insufficient accuracy flat fields are some of the main limitations in calibrating images and thus limiting the detection of faint signals. Fortunately, technological advancements are coming to the rescue.

Sensitivity. As noted in the introduction, today's CCD and CMOS sensors use silicon photodiodes with peak quantum efficiencies (QE) greater than 90%. It is the non-sensitive materials on the sensor that lower QE. CMOS was further lowered in QE by the electronics that occupied some space in the pixel. Early consumer digital cameras had QE below about 30% and today (circa 2016-2017) QEs in some cameras are above 60%. Figure 4 illustrates the advance in technology. With the newest cameras reaching around 1100 electrons per square micron at ISO 200, there has been a sensitivity improvement from circa 2003 to 2016 of a little less than 3x. With QEs now above 60%, there is only room for about another 50% improvement.

Lower Dark Current. While sensitivity has improved almost 3x over the last dozen years, read noise has been lowered, and in some recent models, dark current has been lowered a significant amount (Figure 5). With the introduction in 2014 of the Canon 7D Mark II, dark current in an uncooled stock consumer digital camera is now at similar levels as some cooled CCD cameras. How many other cameras have achieved this same or better low dark current level requires more testing, which has not yet been done to my knowledge.

Lower Pattern Noise and Better Uniformity. At least as significant as the increase in sensitivity and the lowering of read noise and dark current is the reduction in pattern noise (e.g. banding). The increase in sensitivity and the decrease in read noise and dark current would mean little if the pattern noise seen in cameras from the early to mid 2000s were still present. Sensor manufacturers have greatly refined manufacturing techniques such that pattern noise in the better cameras is almost non-existent.

Digital cameras through the 2000s improved, surpassing 35 mm film in resolution and as megapixel count increased even surpassed medium format film. The improvements came in multiple areas, but perhaps very significant was the pixel to pixel response uniformity (PRNU). This means that one can image a white snow scene, or other elegant scene with subtle shades and out of the camera comes a wonderful image with no evidence of PRNU. There is no need for the professional photographer on a fashion shoot, the wildlife photographer imaging polar bears in a snowstorm or the astrophotographer to worry about making flat fields to correct their images to reduce PRNU. The bottom line is the modern digital camera is impressively uniform with high signal levels.

The low end of the intensity range in astrophotography is where the faint galaxy and nebula signals are located, and those signals are superimposed on a pedestal of light pollution and airglow. The light pollution and airglow must be subtracted and the small signals amplified. To subtract the airglow and light pollution and extract the faint deep sky astro signals, the calibration of the flat field must be excellent, and the PRNU at the low end must be very low. Even a few percent error in the flat field intensity distribution will translate into a large false signal and inadequate or over subtraction of the light pollution and airglow, and that will compromise detection of the faint signals. If pattern noise is superimposed on this error, pretty much all hope is lost in making a clean image. With the low PRNU in modern cameras, and as we shall see, excellent modeled flat fields that one can apply, the flat calibration is superb in many cases without the photographer needing to measure flat fields. (If you have a telescope, you will need to measure flat fields, but not with commercial lenses.)
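A small worked example shows why a few percent flat field error matters so much. The numbers are hypothetical: a sky pedestal of 1000 electrons, a flat field that is 2% too high in one part of the frame, and a faint subject of 5 electrons. The function name is illustrative only.

```python
def residual_after_sky_subtraction(sky: float, flat_error: float) -> float:
    """Leftover sky signal when a flat that is off by flat_error is applied.

    The sky pedestal is divided by (1 + flat_error) instead of 1, and a
    uniform sky level equal to the true pedestal is then subtracted.
    """
    corrected = sky / (1.0 + flat_error)
    return corrected - sky

sky_pedestal = 1000.0   # electrons of light pollution plus airglow (hypothetical)
faint_signal = 5.0      # electrons from the faint subject (hypothetical)

residual = residual_after_sky_subtraction(sky_pedestal, 0.02)
print(f"residual from a 2% flat error: {residual:.1f} electrons")
print(f"that is {abs(residual) / faint_signal:.1f}x the faint signal")
```

In this example the false gradient left by the flat field error is several times larger than the subject itself, so the faint signal is lost unless the flat is accurate to a small fraction of a percent.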

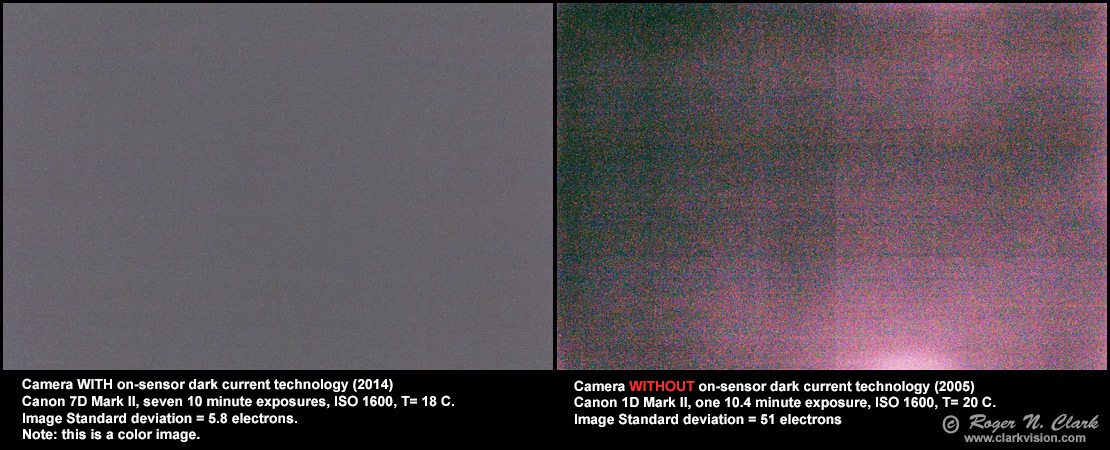

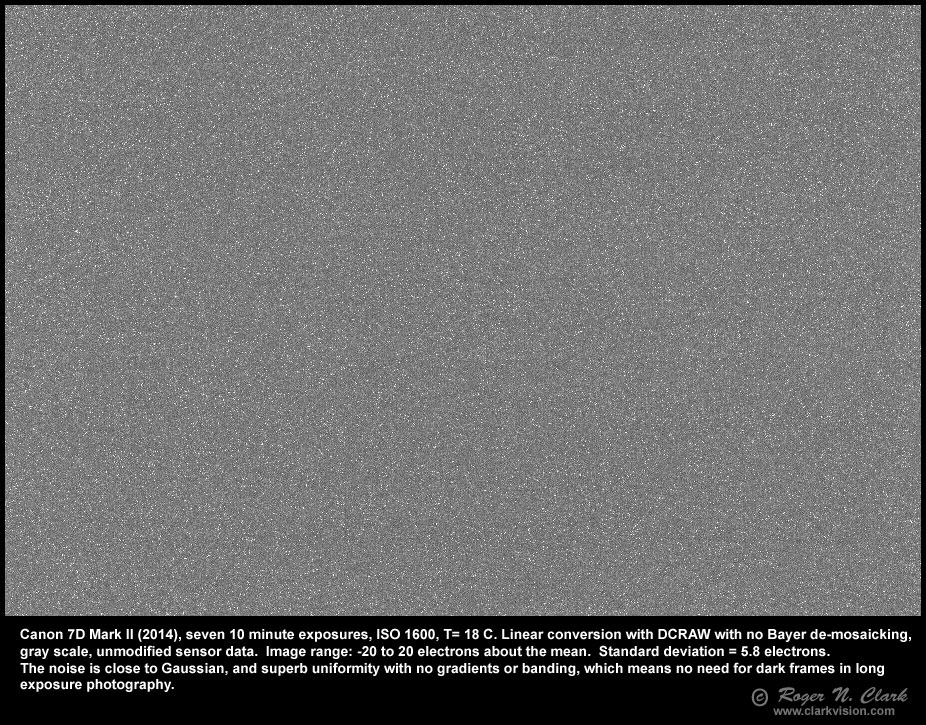

Low end uniformity has improved too. The low light long exposure uniformity is illustrated for two cameras in Figure 6. No longer do we see amp glow (the pink around the edges of the frame in the image in Figure 6, right). Besides lowering the dark current, vastly improving pattern noise, there is one additional amazing new technological development: On Sensor Dark Current Suppression. This was discussed in a previous article: On-Sensor Dark Current Suppression Technology.

On sensor dark current suppression is an electronics technology in each pixel that reduces the dark current level during the exposure and, in the best implementations, completely subtracts the dark current. On Sensor Dark Current Suppression is not Long Exposure Noise Reduction. It is not software and it is not firmware. It is part of the hardware pixel design and cannot be turned on or off.

The big advantage of On Sensor Dark Current Suppression Technology is that this is DARK CURRENT SUBTRACTION IN THE PIXEL DURING THE LIGHT EXPOSURE! This means there is no need for doing separate dark frame subtraction with an approximation of the temperature. The dark current is subtracted at the exact same temperature as the light frame because they are done simultaneously.

All this means that by suppressing dark current inside the pixel during the exposure on the subject, there is no problem of mismatched temperature from dark frames made at other times and at slightly different temperatures. This technology results in the amazing smoothness out-of-camera of the 5-minute dark frames shown in Table 4 of my Canon 7D Mark II review, especially when compared to older cameras without the technology (Figure 6, right panel), and in the impressive smoothness of the 10-minute dark frame shown in Figure 6.

In a very long exposure, the dark current suppression is so good in the Canon 7D Mark II, that 70 minutes of exposure at room temperature still shows no amp glow or pattern noise (Figure 7). Such low noise in such a long exposure means objects where only a few photons are recorded in 70 minutes can be detected. And that is exactly the case in the Integrated Flux Nebula detection in Figure 1.

Not all new cameras have on-sensor dark current suppression technology. Lower end, entry consumer cameras may have cheaper sensors that do not have this technology.

For more information on this topic see Part 7b) On-Sensor Dark Current Suppression Technology.

Now let's put all the above knowledge together to see how the calibration of long exposure low light images, like astrophotos, might change.

Remember equation 1: C = (L - D) / (F) (equation 1)

Dark Current. The first obvious change is that with On Sensor Dark Current Suppression Technology being dark frame subtraction, out of camera the dark current, D, is now zero, so the equation now becomes:

C = L / F (equation 2)

For cameras with On Sensor Dark Current Suppression Technology there is no longer a need to measure dark frames (caveat below).

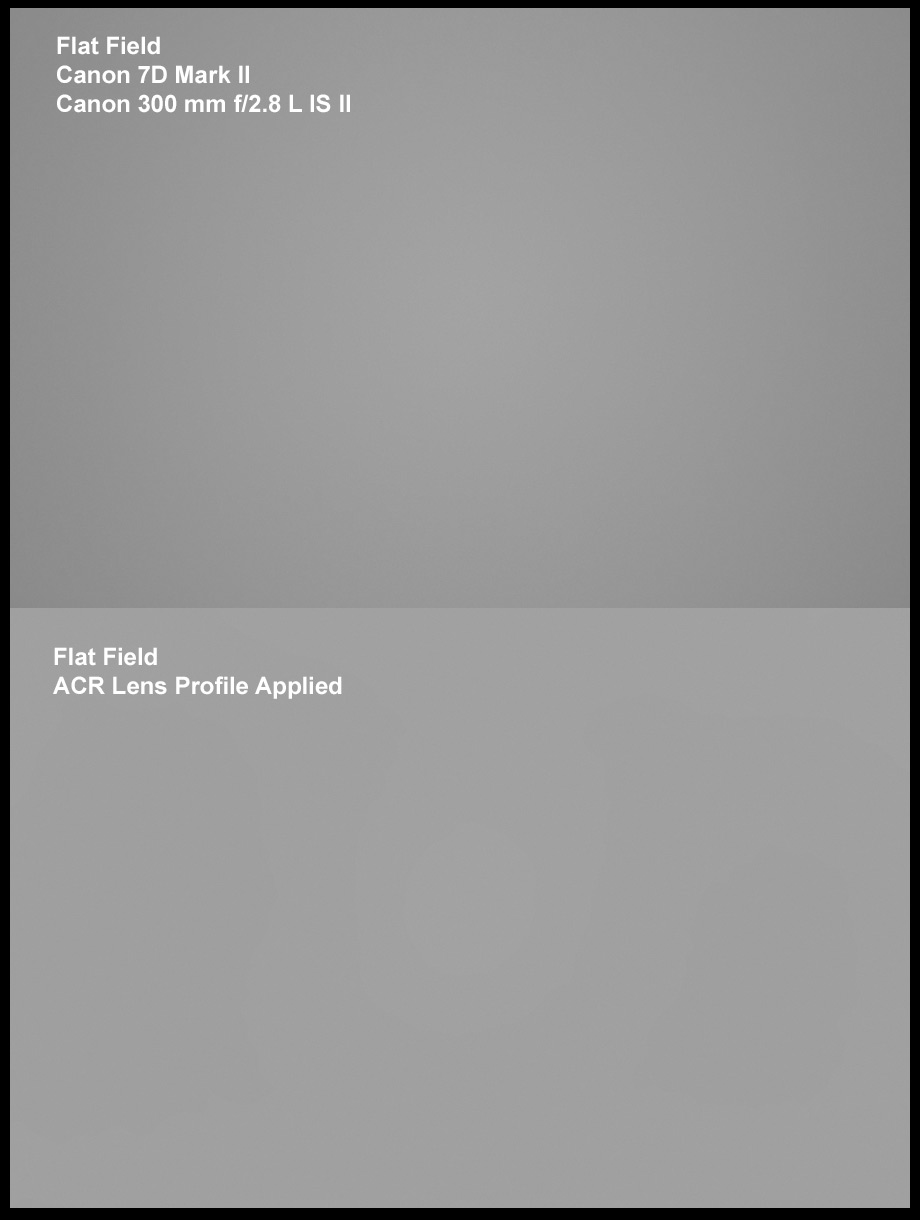

The Flat Field. With improvements in PRNU, the flat field now mainly serves just 2 purposes: correcting for light fall-off, and correcting for dust spots from dust on the filters above the sensor. Digital cameras now include a shaking system to shake off most dust. If you practice good lens changing strategy it will minimize dust contamination. For example, I image the world over, including the dusty Serengeti, and rarely have a dust problem. The image in Figure 1 was made in December 2016 with a camera that was purchased in 2014, has traveled the world through the Serengeti and many other harsh environments, and has never had the sensor manually cleaned. The flat field in Figure 8, measured in 2017, shows no evidence of dust spots. The only cleaning done is the internal shaking of the filters over the sensor when the camera is turned on or off, or when I select the shaking from the menu system.

With a clean sensor and effectively no PRNU, the flat field's sole remaining purpose is to correct for light fall-off (Figure 8).

Dark Current Caveat. With uncooled digital cameras, working in hot environments can still result in some low level pattern noise even with on-sensor dark current suppression. Depending on the camera and the maturity of the on-sensor dark current suppression technology you may see some pattern noise. Multi-exposure stacking with offsets can mitigate the problem, or dark frame subtraction in a more traditional environment might be beneficial. I have imaged with the Canon 7D2 at 33 C with no dark or bias frames and had only minimal degradation, for example: The Cone Nebula.

While the hardware technology in digital cameras has been evolving, so has the software to handle the data from the cameras. Many raw converters now include lens profiles. Lens profiles include a model flat field. Chromatic aberrations can be corrected and noise can be reduced. The sensors include masked pixels at their edges and the raw converter reads those masked pixels to determine offsets.

The latest research on image reconstruction is showing an advantage to putting more things into the raw converter. For example, see this recent review: Goossens et al., (2015) An Overview of State-Of-The-Art Denoising and Demosaicking Techniques: Toward a Unified Framework for Handling Artifacts During Image Reconstruction (pdf).

Important to note for those used to traditional calibration, as noted in equation 2 above, dark current subtraction has been moved into hardware on the sensor and is applied at the exact temperature of the pixel on the linear data. The flat field is now in the lens profile in the raw converter and is applied to the linear data from the sensor. Some cameras now even include the lens profile in firmware in the camera which can be applied to the in-camera generated jpeg.

The modern raw converter also reads the bad pixel list saved in the raw file and better algorithms will skip over those pixels during raw conversion. That can produce a better product with fewer artifacts around those bad pixels. Traditionally, the argument for dark frames is to also measure hot pixels. The bad pixel list can be updated in the field, at least on some cameras, further reducing the need for dark frame characterization of hot pixels. Updating the bad pixel list was discussed in Nightscape and Astrophotography Image Processing Basic Work Flow.

If your lens/telescope is not in the lens profiles, then you can add it yourself. That of course requires measuring the flat field. For example, for Photoshop and ACR, Adobe has a profile creator:

The public archival format for digital camera raw data (including Adobe Lens Profile Creator) at https://helpx.adobe.com/

Adobe Lens Profile Creator at http://supportdownloads.adobe.com/detail.jsp?ftpID=5490

So, putting this all together, equation 1 with the new sensor and post processing raw converter advancements effectively reduces to equation 3 from the user perspective:

C = L (equation 3, through a modern raw converter).

This means that between the sensor and the raw conversion software, excellent calibrated images are now possible without the traditional calibration procedures of measuring dark frames, bias frames, or flat fields (with profiled lenses).

The great news for astrophotography and other low light long exposure applications is that with the advancements in sensor technology and post processing raw conversion, the traditional workflow for calibration has been moved into the sensor and raw converter. Further, the improvements in pattern noise means the calibrations are better than ever before possible, enabling cleaner images and fainter objects to be detected with less effort.

The improvements from the early 2000s to mid 2010s are quite impressive. The quantum efficiencies have improved about 2x, perhaps a little more. Read noise has decreased some 3 to 10x and pattern noise even more. The largest improvement for long exposure low light photography is the improvement in dark current, and the new dark current suppression technology. This means if you have one of the early 2000s digital cameras and you upgrade to one of the newer models with these advanced features, you should see an improvement in low light detection of as much as 10 or more times. That means what took 10 hours of exposure in the early 2000s might be done today with an hour or less total exposure time.

One of the top low light long exposure cameras with very low dark current is the Canon 7D Mark II 20-megapixel digital camera. I have not seen data for any camera with lower dark current than the 7D2. The PRNU of the 7D2 sensor is a fraction of a percent: less than 0.5% on linear data; less than 0.1% root mean square (RMS) out of the Adobe Camera Raw (ACR) raw converter.

References and Further Reading

Alarcon et al., 2023, Scientific CMOS Sensors in Astronomy: IMX455 and IMX411

Publications of the Astronomical Society of the Pacific 135:055001 (15pp).

https://doi.org/10.1088/1538-3873/acd04a


For a good review of the evolving state of CCD and CMOS technology, see: A Review of the Pinned Photodiode for CCD and CMOS Image Sensors http://ericfossum.com/Publications/Papers/2014%20JEDS%20Review%20of%20the%20PPD.pdf

See the section on dark current, page 39 left column: "Negative bias on TG during signal integration can help draw holes to under the PPD edge of TG and suppress dark current generation from Si-SiO 2 interface states [83]-[85]."

Note too that the author states on page 35: "widespread adoption of the PPD in CMOS image sensors occurred in the early 2000s and helped CMOS APS achieve imaging performance on par with, or exceeding, CCDs."

Here is an example astrophoto with no darks with a 60Da: http://www.nightanddayastrophotography.com/gallery/urbanm27nodarks.html

Technology Evolution and Debugging of CMOS Image Sensor

http://www.hbgk.net/en/TechSolutioninfo.aspx?ffaid=491&faid=1378&lan=2&hffaid=485

1.3 Dark current suppression technology: Dark current is produced by microcrystalline defect or leakage current on CMOS and pixel charge is accumulated and increased in the case of long exposure or temperature rise, which causes CMOS to produce noise. For this reason, Canon Company adopts the architecture of "buried photodiode" to reduce probability of noise.

Clarkvision.com Nightscapes Gallery.


http://clarkvision.com/articles/dark-current-suppression-technology

First Published January 17, 2017

Last updated July 12, 2025